ROS 2 Robotic Systems Threat Model

Authors: Thomas Moulard, Juan Hortala, Xabi Perez, Gorka Olalde, Borja Erice, Odei Olalde, David Mayoral

Date Written: 2019-03

Last Modified: 2021-01

This is a DRAFT DOCUMENT.

Disclaimer:

- This document is not exhaustive. Mitigating all attacks in this document does not ensure any robotic product is secure.

- This document is a live document. It will continue to evolve as we implement and mitigate attacks against reference platforms.

Table of Contents

- ROS 2 Robotic Systems Threat Model

- Table of Contents

- Document Scope

- Robotic Systems Threats Overview

- Threat Analysis for the TurtleBot 3 Robotic Platform

- Threat Analysis for the MARA Robotic Platform

- References

Document Scope

This document describes potential threats for ROS 2 robotic systems. The document is divided into three parts:

- Robotic Systems Threats Overview

- Threat Analysis for the TurtleBot 3 Robotic Platform

- Threat Analysis for the MARA Robotic Platform

The first section lists and describes threats from a theoretical point of view. Explanations in this section should hold for any robot built using a component-oriented architecture. The second section instantiates those threats on a widely-available reference platform, the TurtleBot 3. Mitigating threats on this platform enables us to demonstrate the viability of our recommendations.

Robotic Systems Threats Overview

This section is intentionally independent from ROS as robotic systems share common threats and potential vulnerabilities. For instance, this section describes “robotic components” while the next section will mention “ROS 2 nodes”.

Defining Robotic Systems Threats

We will consider as a robotic system one or more general-purpose computers connected to one or more actuators or sensors. An actuator is defined as any device producing physical motion. A sensor is defined as any device capturing or recording a physical property.

Robot Application Actors, Assets, and Entry Points

This section defines actors, assets, and entry points for this threat model.

Actors are humans or external systems interacting with the robot. Considering which actors interact with the robot is helpful to determine how the system can be compromised. For instance, actors may be able to give commands to the robot which may be abused to attack the system.

Assets represent any user, resource (e.g. disk space), or property (e.g. physical safety of users) of the system that should be defended against attackers. Properties of assets can be related to achieving the business goals of the robot. For example, sensor data is a resource/asset of the system and the privacy of that data is a system property and a business goal.

Entry points represent how the system is interacting with the world (communication channels, API, sensors, etc.).

Robot Application Actors

Actors are divided into multiple categories based on whether they are physically present next to the robot (could the robot harm them?), whether they are human, and whether they are a “power user”. A power user is defined as someone who is knowledgeable and executes tasks which are normally not done by end-users (build and debug new software, deploy code, etc.).

| Actor | Co-Located? | Human? | Power User? | Notes |

|---|---|---|---|---|

| Robot User | Y | Y | N | Human interacting physically with the robot. |

| Robot Developer / Power User | Y | Y | Y | User with robot administrative access or developer. |

| Third-Party Robotic System | Y | N | - | Another robot or system capable of physical interaction with the robot. |

| Teleoperator / Remote User | N | Y | N | A human tele-operating the robot or sending commands to it through a client application (e.g. smartphone app) |

| Cloud Developer | N | Y | Y | A developer building a cloud service connected to the robot or an analyst who has been granted access to robot data. |

| Cloud Service | N | N | - | A service sending commands to the robot automatically (e.g. cloud motion planning service) |

Assets

Assets are categorized into privacy (robot private data should not be accessible by attackers), integrity (robot behavior should not be modified by attacks) and availability (robot should continue to operate even under attack).

| Asset | Description |

|---|---|

| Privacy | |

| Sensor Data Privacy | Sensor data must not be accessed by unauthorized actors. |

| Robot Data Stores Privacy | Robot persistent data (logs, software, etc.) must not be accessible by unauthorized actors. |

| Integrity | |

| Physical Safety | The robotic system must not harm its users or environment. |

| Robot Integrity | The robotic system must not damage itself. |

| Robot Actuators Command Integrity | Unallowed actors should not be able to control the robot actuators. |

| Robot Behavior Integrity | The robotic system must not allow attackers to disrupt its tasks. |

| Robot Data Stores Integrity | No attacker should be able to alter robot data. |

| Availability | |

| Compute Capabilities | Robot embedded and distributed (e.g. cloud) compute resources. Starving a robot from its compute resources can prevent it from operating correctly. |

| Robot Availability | The robotic system must answer commands in a reasonable time. |

| Sensor Availability | Sensor data must be available to allowed actors shortly after being produced. |

Entry Points

Entry points describe the system attack surface area (how do actors interact with the system?).

| Name | Description |

|---|---|

| Robot Components Communication Channels | Robotic applications are generally composed of multiple components talking over a shared bus. This bus may be accessible over the robot WAN link. |

| Robot Administration Tools | Tools allowing local or remote users to connect to the robot computers directly (e.g. SSH, VNC). |

| Remote Application Interface | Remote applications (cloud, smartphone application, etc.) can be used to read robot data or send robot commands (e.g. cloud REST API, desktop GUI, smartphone application). |

| Robot Code Deployment Infrastructure | Deployment infrastructure for binaries or configuration files is granted read/write access to the robot computers' filesystems. |

| Sensors | Sensors are capturing data which usually end up being injected into the robot middleware communication channels. |

| Embedded Computer Physical Access | External (HDMI, USB...) and internal (PCI Express, SATA...) ports. |

Robot Application Components and Trust Boundaries

The system is divided into hardware (embedded general-purpose computer, sensors, actuators), multiple components (usually processes) running on multiple computers (trusted or non-trusted components) and data stores (embedded or in the cloud).

While the computers may run well-controlled, trusted software (trusted components), other off-the-shelf robotics components (non-trusted nodes) may be included in the application. Third-party components may be malicious (extract private data, install a rootkit, etc.) or their QA validation process may not be as extensive as that of in-house software. The release process of third-party components creates additional security threats (a third-party component may be compromised during its distribution).

A trusted robotic component is defined as a node developed, built, tested and deployed by the robotic application owner or vetted partners. As the process is owned end-to-end by a single organization, we can assume that the node will respect its specifications and will not, for instance, try to extract and leak private information. While carefully controlled engineering processes can reduce the risk of malicious behavior (accidental or intentional), they cannot completely eliminate it. Trusted nodes can still, for example, leak private data.

Trusted nodes should not trust non-trusted nodes. It is likely that more than one non-trusted component is embedded in any given robotic application. It is important for non-trusted components to not trust each other as one malicious non-trusted node may try to compromise another non-trusted node.

An example of a trusted component could be an in-house (or carefully vetted) IMU driver node. This component may communicate through unsafe channels with other driver nodes to reduce sensor data fusion latency. Trusting components is never ideal but it may be acceptable if the software is well-controlled.

Conversely, a non-trusted node can be a third-party object tracker. Deploying this node without adequate sandboxing could impact:

- User privacy: the node is streaming back user video without their consent

- User safety: the robot is following the object detected by the tracker and its speed is proportional to the object distance. The malicious tracker estimates the object position very far away on purpose to trick the robot into suddenly accelerating and hurting the user.

- System availability: the node may try to consume all available computing resources (CPU, memory, disk) and prevent the robot from performing correctly.

- System Integrity: the robot is following the object detected by the tracker. The attacker can tele-operate the robot by controlling the estimated position of the tracked object (detect an object on the left to make the robot move to the left, etc.).
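As a minimal sketch of why a trusted component should validate what it receives from a non-trusted one, the hypothetical follower below rejects implausible tracker estimates and caps the commanded speed. The names (`MAX_RANGE_M`, `MAX_SPEED_MPS`, `GAIN`, `follow_speed`) and the numeric limits are assumptions chosen for illustration; they are not part of any ROS 2 API, and such a check reduces the impact of a malicious tracker without replacing sandboxing.

```python
import math

# Illustrative limits (assumptions, not values from a real platform).
MAX_RANGE_M = 5.0      # farthest distance the tracker can plausibly report
MAX_SPEED_MPS = 0.2    # hard cap on the commanded forward speed
GAIN = 0.5             # proportional gain: speed = GAIN * distance


def follow_speed(distance_m: float) -> float:
    """Return a bounded forward speed for an untrusted distance estimate."""
    # Reject NaN, infinite, negative, or out-of-range estimates outright.
    if not math.isfinite(distance_m) or not 0.0 <= distance_m <= MAX_RANGE_M:
        return 0.0
    # Speed stays proportional to distance, but never exceeds the cap.
    return min(GAIN * distance_m, MAX_SPEED_MPS)
```

With these limits, an estimate placed "very far away on purpose" is either discarded (beyond `MAX_RANGE_M`) or results in at most `MAX_SPEED_MPS`, so the tracker can no longer trigger a sudden acceleration.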

Nodes may also communicate with the local filesystem, cloud services or data stores. Those services or data stores can be compromised and should not be automatically trusted. For instance, URDF robot models are usually stored in the robot file system. This model stores robot joint limits. If the robot file system is compromised, those limits could be removed which would enable an attacker to destroy the robot.
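One way to detect such tampering, sketched here under stated assumptions, is to compare the model file against a reference digest before loading it. The function names are hypothetical, and the sketch assumes the reference digest is kept somewhere the attacker cannot write to (for example a read-only partition or a signed manifest); it only detects modification and does not prevent it.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def model_is_unmodified(path: Path, expected_digest: str) -> bool:
    """Compare the file digest with a reference digest stored elsewhere."""
    # compare_digest avoids leaking where the two strings first differ.
    return hmac.compare_digest(sha256_of(path), expected_digest)
```

A loader could refuse to start the robot when `model_is_unmodified` returns `False`, rather than running with joint limits that may have been removed.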

Finally, users may try to rely on sensors to inject malicious data into the system (Akhtar, Naveed, and Ajmal Mian. “Threat of Adversarial Attacks on Deep Learning in Computer Vision: A Survey.”).

The diagram below illustrates an example application with different trust zones (trust boundaries shown with dashed green lines). The number and scope of trust zones depend on the application.

Diagram Source

(edited with Threat Dragon)


Threat Analysis and Modeling

The table below lists all generic threats which may impact a robotic application.

Threat categorization is based on the STRIDE (Spoofing / Tampering / Repudiation / Information disclosure / Denial of service / Elevation of privileges) model. Risk assessment relies on DREAD (Damage / Reproducibility / Exploitability / Affected users / Discoverability).

In the following table, the “Threat Category (STRIDE)” columns indicate the categories to which a threat belongs. If the “Spoofing” column is marked with a check sign (✓), it means that this threat can be used to spoof a component of the system. If it cannot be used to spoof a component, a cross sign will be present instead (✘).

The “Threat Risk Assessment (DREAD)” columns contain a score indicating how easy or likely it is for a particular threat to be exploited. The allowed score values are 1 (not at risk), 2 (may be at risk) or 3 (at risk, needs to be mitigated). For instance, in the damage column a 1 would mean “exploitation of the threat would cause minimum damages”, 2 “exploitation of the threat would cause significant damages” and 3 “exploitation of the threat would cause massive damages”. The “total score” is computed by adding the score of each column. The higher the score, the more critical the threat.
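The scoring rule described above can be sketched as a small data structure. The class and field names are illustrative assumptions; only the allowed values (1, 2, 3) and the summation come from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dread:
    """One DREAD assessment; each criterion is scored 1, 2 or 3."""
    damage: int
    reproducibility: int
    exploitability: int
    affected_users: int
    discoverability: int

    def __post_init__(self) -> None:
        # Enforce the allowed score values for every criterion.
        for name, value in vars(self).items():
            if value not in (1, 2, 3):
                raise ValueError(f"{name} must be 1, 2 or 3, got {value}")

    @property
    def total(self) -> int:
        """Total score: the sum of the five criteria (range 5 to 15)."""
        return sum(vars(self).values())


# Scores of the first row of the table ("An attacker spoofs a component identity.").
spoofed_identity = Dread(3, 1, 1, 2, 3)
print(spoofed_identity.total)  # prints 10
```

Because every criterion is between 1 and 3, totals range from 5 to 15, which makes scores comparable across threats.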

Impacted assets, entry points and business goals columns indicate whether an asset, entry point or business goal is impacted by a given threat. A check sign (✓) means impacted, a cross sign (✘) means not impacted. A triangle (▲) means “impacted indirectly or under certain conditions”. For instance, compromising the robot kernel may not be enough to steal user data but it makes stealing data much easier.

| Threat Description | Threat Category (STRIDE) | Threat Risk Assessment (DREAD) | Impacted Assets | Impacted Entry Points | Mitigation Strategies | Similar Attacks in the Literature | ||||||||||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Spoofing | Tampering | Repudiation | Info. Disclosure | Denial of Service | Elev. of Privileges | Damage | Reproducibility | Exploitability | Affected Users | Discoverability | DREAD Score | Robot Compute Rsc. | Physical Safety | Robot Avail. | Robot Integrity | Data Integrity | Data Avail. | Data Privacy | Embedded H/W | Robot Comm. Channels | Robot Admin. Tools | Remote App. Interface | Deployment Infra. | |||||

| Embedded / Software / Communication / Inter-Component Communication | ||||||||||||||||||||||||||||

| An attacker spoofs a component identity. | ✓ | ✓ | ✘ | ✓ | ✘ | ✓ | 3 | 1 | 1 | 2 | 3 | 10 | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ |

|

Bonaci, Tamara, Jeffrey Herron, Tariq Yusuf, Junjie Yan, Tadayoshi Kohno, and Howard Jay Chizeck. “To Make a Robot Secure: An Experimental Analysis of Cyber Security Threats Against Teleoperated Surgical Robots.” ArXiv:1504.04339 [Cs], April 16, 2015. | ||

| An attacker intercepts and alters a message. | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | 3 | 3 | 3 | 3 | 3 | 15 | ✘ | ✓ | ✓ | ✓ | ✓ | ✓ | ▲ | ✘ | ✓ | ✘ | ✘ | ✘ |

|

Bonaci, Tamara, Jeffrey Herron, Tariq Yusuf, Junjie Yan, Tadayoshi Kohno, and Howard Jay Chizeck. “To Make a Robot Secure: An Experimental Analysis of Cyber Security Threats Against Teleoperated Surgical Robots.” ArXiv:1504.04339 [Cs], April 16, 2015. | ||

| An attacker writes to a communication channel without authorization. | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | 3 | 3 | 3 | 3 | 3 | 15 | ✘ | ✓ | ✓ | ✓ | ✘ | ✘ | ✓ | ✘ | ✓ | ✘ | ✘ | ✘ |

|

Bonaci, Tamara, Jeffrey Herron, Tariq Yusuf, Junjie Yan, Tadayoshi Kohno, and Howard Jay Chizeck. “To Make a Robot Secure: An Experimental Analysis of Cyber Security Threats Against Teleoperated Surgical Robots.” ArXiv:1504.04339 [Cs], April 16, 2015. | ||

| An attacker listens to a communication channel without authorization. | ✘ | ✘ | ✘ | ✓ | ✘ | ✘ | 2 | 3 | 3 | 3 | 3 | 14 | ✘ | ✘ | ✓ | ✓ | ✓ | ✓ | ✓ | ✘ | ✓ | ✘ | ✘ | ✘ |

|

Bonaci, Tamara, Jeffrey Herron, Tariq Yusuf, Junjie Yan, Tadayoshi Kohno, and Howard Jay Chizeck. “To Make a Robot Secure: An Experimental Analysis of Cyber Security Threats Against Teleoperated Surgical Robots.” ArXiv:1504.04339 [Cs], April 16, 2015. | ||

| An attacker prevents a communication channel from being usable. | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | 3 | 3 | 3 | 3 | 3 | 15 | ✓ | ▲ | ✓ | ✓ | ✓ | ✓ | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ |

|

Bonaci, Tamara, Jeffrey Herron, Tariq Yusuf, Junjie Yan, Tadayoshi Kohno, and Howard Jay Chizeck. “To Make a Robot Secure: An Experimental Analysis of Cyber Security Threats Against Teleoperated Surgical Robots.” ArXiv:1504.04339 [Cs], April 16, 2015. | ||

| Embedded / Software / Communication / Long-Range Communication (e.g. WiFi, Cellular Connection) | ||||||||||||||||||||||||||||

| An attacker hijacks robot long-range communication | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | 3 | 2 | 1 | 3 | 1 | 10 | ✘ | ✓ | ▲ | ✓ | ✓ | ✘ | ✓ | ✘ | ✓ | ✓ | ✓ | ✓ |

|

Bonaci, Tamara, Jeffrey Herron, Tariq Yusuf, Junjie Yan, Tadayoshi Kohno, and Howard Jay Chizeck. “To Make a Robot Secure: An Experimental Analysis of Cyber Security Threats Against Teleoperated Surgical Robots.” ArXiv:1504.04339 [Cs], April 16, 2015. | ||

| An attacker intercepts robot long-range communications (e.g. MitM) | ✘ | ✘ | ✘ | ✓ | ✘ | ✘ | 1 | 2 | 1 | 3 | 1 | 8 | ✘ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✘ | ✓ | ✓ | ✓ | ✓ |

|

Bonaci, Tamara, Jeffrey Herron, Tariq Yusuf, Junjie Yan, Tadayoshi Kohno, and Howard Jay Chizeck. “To Make a Robot Secure: An Experimental Analysis of Cyber Security Threats Against Teleoperated Surgical Robots.” ArXiv:1504.04339 [Cs], April 16, 2015. | ||

| An attacker disrupts (e.g. jams) robot long-range communication channels. | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | 2 | 2 | 1 | 1 | 3 | 9 | ✘ | ▲ | ✓ | ✘ | ✘ | ✓ | ✘ | ✘ | ✓ | ✓ | ✓ | ✓ |

|

Bonaci, Tamara, Jeffrey Herron, Tariq Yusuf, Junjie Yan, Tadayoshi Kohno, and Howard Jay Chizeck. “To Make a Robot Secure: An Experimental Analysis of Cyber Security Threats Against Teleoperated Surgical Robots.” ArXiv:1504.04339 [Cs], April 16, 2015. | ||

| Embedded / Software / Communication / Short-Range Communication (e.g. Bluetooth) | ||||||||||||||||||||||||||||

| An attacker executes arbitrary code using a short-range communication protocol vulnerability. | ✘ | ✓ | ✓ | ✓ | ✓ | ✓ | 3 | 2 | 1 | 1 | 3 | 10 | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

Checkoway, Stephen, Damon McCoy, Brian Kantor, Danny Anderson, Hovav Shacham, Stefan Savage, Karl Koscher, Alexei Czeskis, Franziska Roesner, and Tadayoshi Kohno. “Comprehensive Experimental Analyses of Automotive Attack Surfaces.” In Proceedings of the 20th USENIX Conference on Security, 6–6. SEC’11. Berkeley, CA, USA: USENIX Association, 2011. | ||

| Embedded / Software / Communication / Remote Application Interface | ||||||||||||||||||||||||||||

| An attacker gains unauthenticated access to the remote application interface. | ✓ | ✓ | ✘ | ✓ | ✓ | ▲ | 3 | 3 | 1 | 1 | 3 | 11 | ✓ | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✓ | ✘ | ✓ | ✓ | ✓ |

|

|||

| An attacker could eavesdrop communications to the Robot’s remote application interface. | ✘ | ✘ | ✘ | ✓ | ✘ | ✘ | 1 | 1 | 1 | 1 | 3 | 7 | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

|||

| An attacker could alter data sent to the Robot’s remote application interface. | ✓ | ✓ | ✘ | ✓ | ✓ | ▲ | 3 | 3 | 1 | 1 | 3 | 11 | ✓ | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✓ | ✘ | ✓ | ✓ | ✓ |

|

|||

| Embedded / Software / OS & Kernel | ||||||||||||||||||||||||||||

| An attacker compromises the real-time clock to disrupt the kernel RT scheduling guarantees. | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | 3 | 2 | 1 | 3 | 2 | 11 | ✓ | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

Dessiatnikoff, Anthony, Yves Deswarte, Eric Alata, and Vincent Nicomette. “Potential Attacks on Onboard Aerospace Systems.” IEEE Security & Privacy 10, no. 4 (July 2012): 71–74. | ||

| An attacker compromises the OS or kernel to alter robot data. | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | 3 | 2 | 1 | 3 | 2 | 11 | ✘ | ✘ | ✘ | ✓ | ✓ | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

Clark, George W., Michael V. Doran, and Todd R. Andel. “Cybersecurity Issues in Robotics.” In 2017 IEEE Conference on Cognitive and Computational Aspects of Situation Management (CogSIMA), 1–5. Savannah, GA, USA: IEEE, 2017. | ||

| An attacker compromises the OS or kernel to eavesdrop on robot data. | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ | 1 | 2 | 1 | 3 | 2 | 9 | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

Clark, George W., Michael V. Doran, and Todd R. Andel. “Cybersecurity Issues in Robotics.” In 2017 IEEE Conference on Cognitive and Computational Aspects of Situation Management (CogSIMA), 1–5. Savannah, GA, USA: IEEE, 2017. | ||

| An attacker gains access to the robot OS through its administration interface. | ✘ | ✓ | ✓ | ✓ | ✘ | ✘ | 3 | 3 | 2 | 3 | 3 | 14 | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

|||

| Embedded / Software / Component-Oriented Architecture | ||||||||||||||||||||||||||||

| A node accidentally writes incorrect data to a communication channel. | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | 2 | 3 | 2 | 3 | 3 | 13 | ✘ | ▲ | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ |

|

Jacques-Louis Lions et al. "Ariane 5 Flight 501 Failure." ESA Press Release 33–96, Paris, 1996. | ||

| An attacker deploys a malicious component on the robot. | ✘ | ✓ | ✘ | ✓ | ✘ | ✘ | 3 | 3 | 2 | 3 | 3 | 14 | ✘ | ▲ | ✓ | ✓ | ✓ | ✓ | ✓ | ✘ | ✓ | ✘ | ✘ | ✘ |

|

Checkoway, Stephen, Damon McCoy, Brian Kantor, Danny Anderson, Hovav Shacham, Stefan Savage, Karl Koscher, Alexei Czeskis, Franziska Roesner, and Tadayoshi Kohno. “Comprehensive Experimental Analyses of Automotive Attack Surfaces.” In Proceedings of the 20th USENIX Conference on Security, 6–6. SEC’11. Berkeley, CA, USA: USENIX Association, 2011. | ||

| An attacker can prevent a component running on the robot from executing normally. | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | 2 | 3 | 2 | 3 | 3 | 13 | ✘ | ▲ | ✓ | ✘ | ✘ | ✓ | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ |

|

Dessiatnikoff, Anthony, Yves Deswarte, Eric Alata, and Vincent Nicomette. “Potential Attacks on Onboard Aerospace Systems.” IEEE Security & Privacy 10, no. 4 (July 2012): 71–74. | ||

| Embedded / Software / Configuration Management | ||||||||||||||||||||||||||||

| An attacker modifies configuration values without authorization. | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | 3 | 3 | 3 | 3 | 3 | 15 | ✘ | ▲ | ✓ | ✓ | ✓ | ▲ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

Ahmad Yousef, Khalil, Anas AlMajali, Salah Ghalyon, Waleed Dweik, and Bassam Mohd. “Analyzing Cyber-Physical Threats on Robotic Platforms.” Sensors 18, no. 5 (May 21, 2018): 1643. | ||

| An attacker accesses configuration values without authorization. | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ | 1 | 3 | 3 | 3 | 3 | 13 | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

Ahmad Yousef, Khalil, Anas AlMajali, Salah Ghalyon, Waleed Dweik, and Bassam Mohd. “Analyzing Cyber-Physical Threats on Robotic Platforms.” Sensors 18, no. 5 (May 21, 2018): 1643. | ||

| A user accidentally misconfigures the robot. | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | 3 | 3 | 3 | 3 | 3 | 15 | ✘ | ▲ | ✓ | ✓ | ✓ | ▲ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

|||

| Embedded / Software / Data Storage (File System) | ||||||||||||||||||||||||||||

| An attacker modifies the robot file system by physically accessing it. | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | 3 | 3 | 3 | 3 | 3 | 15 | ✘ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

|||

| An attacker eavesdrops on the robot file system by physically accessing it. | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ | 1 | 3 | 3 | 3 | 3 | 13 | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

|||

| An attacker saturates the robot disk with data. | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | 3 | 3 | 1 | 3 | 3 | 13 | ✘ | ✓ | ✓ | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✓ |

|

|||

| Embedded / Software / Logs | ||||||||||||||||||||||||||||

| An attacker exfiltrates log data to a remote server. | ✘ | ✘ | ✘ | ✓ | ✘ | ✘ | 2 | 2 | 2 | 3 | 3 | 12 | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

|||

| Embedded / Hardware / Sensors | ||||||||||||||||||||||||||||

| An attacker spoofs a robot sensor (by e.g. replacing the sensor itself or manipulating the bus). | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | 3 | 2 | 1 | 3 | 3 | 12 | ✘ | ✘ | ✘ | ✓ | ✓ | ✘ | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ |

|

|||

| Embedded / Hardware / Actuators | ||||||||||||||||||||||||||||

| An attacker spoofs a robot actuator. | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | 1 | 2 | 1 | 3 | 3 | 10 | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ |

|

|||

| An attacker modifies the command sent to the robot actuators. (intercept & retransmit) | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | 3 | 2 | 1 | 3 | 3 | 12 | ✘ | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | ✓ | ✘ |

|

|||

| An attacker intercepts the robot actuators command (can recompute localization). | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ | 1 | 2 | 1 | 3 | 3 | 10 | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ |

|

|||

| An attacker sends malicious command to actuators to trigger the E-Stop | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | 2 | 2 | 3 | 3 | 1 | 11 | ✘ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

|||

| Embedded / Hardware / Auxiliary Functions | ||||||||||||||||||||||||||||

| An attacker compromises the software or sends malicious commands to drain the robot battery. | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | 2 | 3 | 3 | 3 | 3 | 14 | ✘ | ✘ | ✓ | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ |

|

Dessiatnikoff, Anthony, Yves Deswarte, Eric Alata, and Vincent Nicomette. “Potential Attacks on Onboard Aerospace Systems.” IEEE Security & Privacy 10, no. 4 (July 2012): 71–74. | ||

| Embedded / Hardware / Communications | ||||||||||||||||||||||||||||

| An attacker connects to an exposed debug port. | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | 3 | 3 | 3 | 3 | 3 | 15 | ✓ | ✓ | ✓ | ✘ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

|

|||

| An attacker connects to an internal communication bus. | ✓ | ✓ | ✘ | ▲ | ▲ | ▲ | 3 | 3 | 3 | 3 | 3 | 15 | ▲ | ▲ | ▲ | ✘ | ▲ | ▲ | ▲ | ▲ | ▲ | ▲ | ▲ | ▲ |

|

|||

| Remote / Client Application | ||||||||||||||||||||||||||||

| An attacker intercepts the user credentials on their desktop machine. | ✘ | ✘ | ✘ | ✘ | ✘ | ✓ | 2 | 2 | 2 | 3 | 1 | 10 | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | ▲ | ✘ | ✓ | ✘ |

|

|||

| Remote / Cloud Integration | ||||||||||||||||||||||||||||

| An attacker intercepts cloud service credentials deployed on the robot. | ✘ | ✘ | ✘ | ✘ | ✘ | ✓ | 2 | 2 | 1 | 3 | 1 | 9 | ✘ | ▲ | ✘ | ✓ | ✘ | ▲ | ✓ | ✘ | ✘ | ✘ | ✓ | ✘ |

|

|||

| An attacker gains read access to robot cloud data. | ✘ | ✘ | ✘ | ✘ | ✘ | ✓ | 2 | 2 | 1 | 3 | 1 | 9 | ✘ | ✘ | ✘ | ✓ | ✘ | ▲ | ✓ | ✘ | ✘ | ✘ | ✓ | ✘ |

|

|||

| An attacker alters or deletes robot cloud data. | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | 2 | 2 | 1 | 3 | 1 | 9 | ✘ | ▲ | ✘ | ✓ | ✘ | ▲ | ✓ | ✘ | ✘ | ✘ | ✓ | ✘ |

|

|||

| Remote / Software Deployment | ||||||||||||||||||||||||||||

| An attacker spoofs the deployment service. | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | 3 | 3 | 2 | 3 | 3 | 14 | ✘ | ✓ | ▲ | ✓ | ✓ | ▲ | ▲ | ✘ | ✘ | ✘ | ✘ | ✓ |

|

|||

| An attacker modifies the binaries sent by the deployment service. | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | 3 | 3 | 2 | 3 | 3 | 14 | ✘ | ✓ | ▲ | ✓ | ✓ | ▲ | ▲ | ✘ | ✘ | ✘ | ✘ | ✓ |

|

|||

| An attacker intercepts the binaries sent by the deployment service. | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ | 1 | 3 | 2 | 3 | 3 | 12 | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | ✘ | ✘ | ✘ | ✓ |

|

|||

| An attacker prevents the robot and the deployment service from communicating. | ✘ | ✘ | ✘ | ✘ | ✓ | ✘ | 1 | 3 | 2 | 3 | 3 | 12 | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | ✓ | ||||

| Cross-Cutting Concerns / Credentials, PKI and Secrets | ||||||||||||||||||||||||||||

| An attacker compromises a Certificate Authority trusted by the robot. | ✓ | ✓ | ✘ | ✓ | ✘ | ✓ | 3 | 1 | 1 | 2 | 3 | 10 | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ||||

Including a new robot into the threat model

The following steps are recommended in order to extend this document with additional threat models:

- Determine the robot evaluation scenario. This will include:
  - System description and specifications
  - Data assets
- Define the robot environment:
  - External actors
  - Entry points
  - Use cases
- Design security boundary and architectural schemas for the robotic application.
- Evaluate and prioritize entry points
  - Make use of the RSF to find applicable weaknesses on the robot.
  - Take existing documentation as help for finding applicable entry points.
- Evaluate existing threats based on the general threat table and add new ones to the specific threat table.
  - Evaluate new threats with the DREAD and STRIDE methodologies.

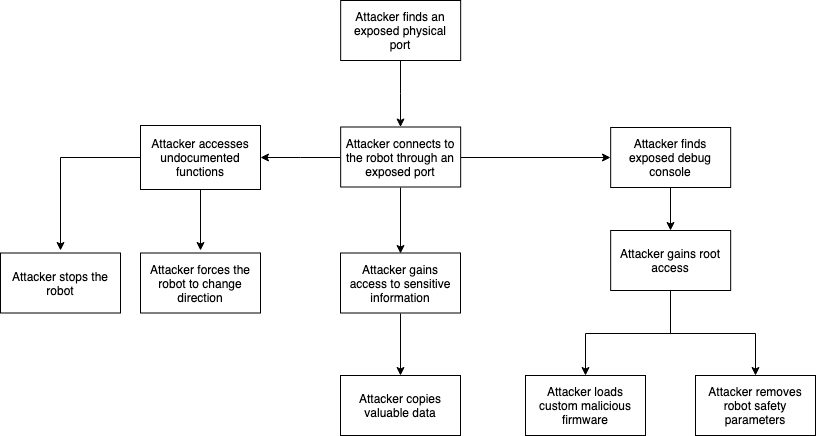

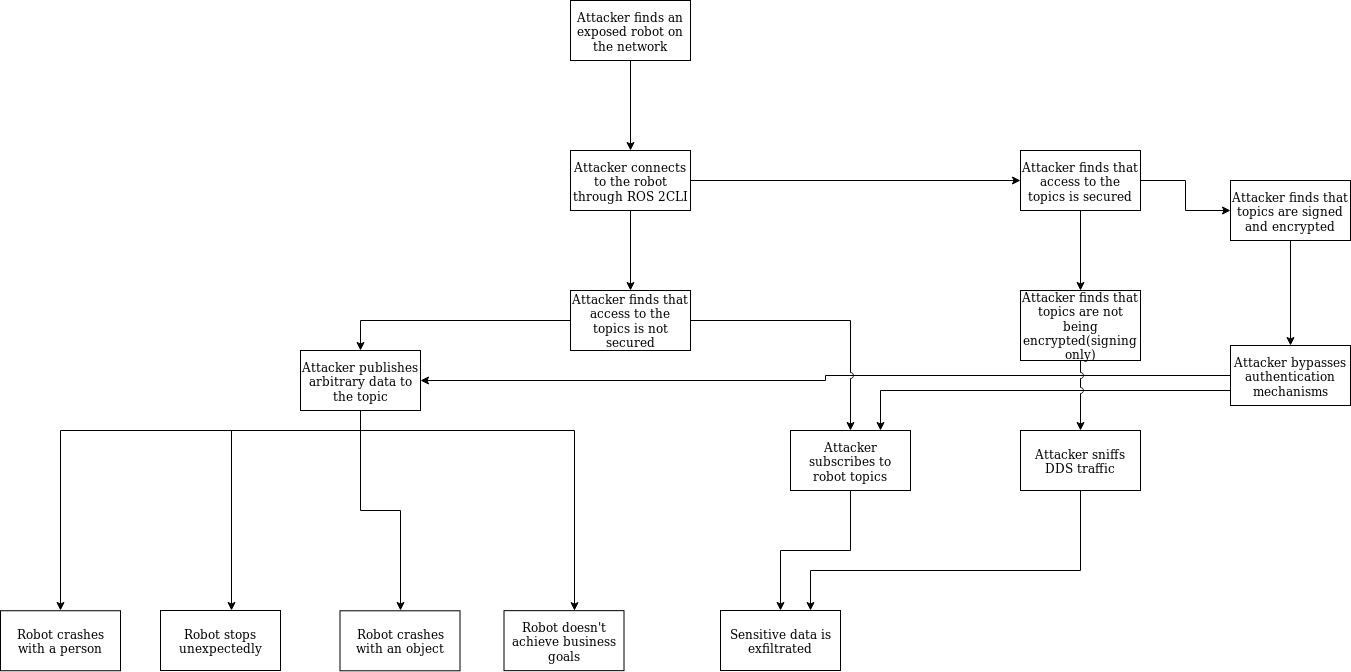

- Design hypothetical attack trees for each of the entry points, detailing the affected resources on the process.

- Create a Pull Request and submit the changes to the ros2/design repository.
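For the attack-tree step, a tree can be kept as plain data alongside the threat table. The sketch below is one possible representation and not a format required by this document: the `AttackNode` class, the AND/OR gate convention, and the example goals are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class AttackNode:
    goal: str
    gate: str = "OR"  # "OR": any child suffices; "AND": all children are needed
    affected: List[str] = field(default_factory=list)
    children: List["AttackNode"] = field(default_factory=list)

    def affected_resources(self) -> List[str]:
        """Collect affected resources over the whole subtree, without duplicates."""
        seen: List[str] = []
        for item in self.affected:
            if item not in seen:
                seen.append(item)
        for child in self.children:
            for item in child.affected_resources():
                if item not in seen:
                    seen.append(item)
        return seen

    def achievable(self, feasible_leaves: Set[str]) -> bool:
        """Evaluate the tree given the set of leaf goals an attacker can reach."""
        if not self.children:
            return self.goal in feasible_leaves
        results = [child.achievable(feasible_leaves) for child in self.children]
        return all(results) if self.gate == "AND" else any(results)


# Hypothetical tree for an administration-tool entry point.
root = AttackNode(
    goal="Run commands on the robot computer",
    affected=["Robot Behavior Integrity"],
    children=[
        AttackNode(
            goal="Log in through SSH",
            gate="AND",
            affected=["Robot Administration Tools"],
            children=[
                AttackNode("Reach the robot over the network"),
                AttackNode("Obtain valid SSH credentials", affected=["SSH credentials"]),
            ],
        ),
        AttackNode(
            goal="Use an exposed debug port",
            affected=["Embedded Computer Physical Access"],
        ),
    ],
)
```

Calling `root.affected_resources()` lists every resource touched by some branch, which is the information the step asks to detail, and `root.achievable(...)` shows which combinations of leaf conditions are enough to reach the root goal.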

Threat Analysis for the TurtleBot 3 Robotic Platform

System description

The application considered in this section is tele-operation of a TurtleBot 3 robot using an Xbox controller.

The robot considered in this section is a TurtleBot 3 Burger. It is a small educational robot embedding an IMU and a Lidar, two motors / wheels, and a chassis that hosts a battery, a Raspberry Pi 3 Model B+ Single Board Computer, and an OpenCR 1.0 Arduino compatible board that interacts with the sensors. The robot computer runs two nodes:

- `turtlebot3_node`, which forwards sensor data and actuator control commands,

- `raspicam2_node`, which forwards camera data.

We also make the assumption that the robot is running a ROS 2 port of the AWS CloudWatch sample application. A ROS 2 version of this component is not yet available, but it will help us demonstrate the threats related to connecting a robot to a cloud service.

For the purpose of demonstrating the threats associated with distributing a ROS graph among multiple hosts, an Xbox controller is connected to a secondary computer (“remote host”). The secondary computer runs two additional nodes:

- `joy_node`, which forwards joystick input as ROS 2 messages,

- `teleop_twist_joy`, which converts ROS 2 joystick messages to control commands.

Finally, the robot data is accessed by a test engineer through a “field testing” computer.

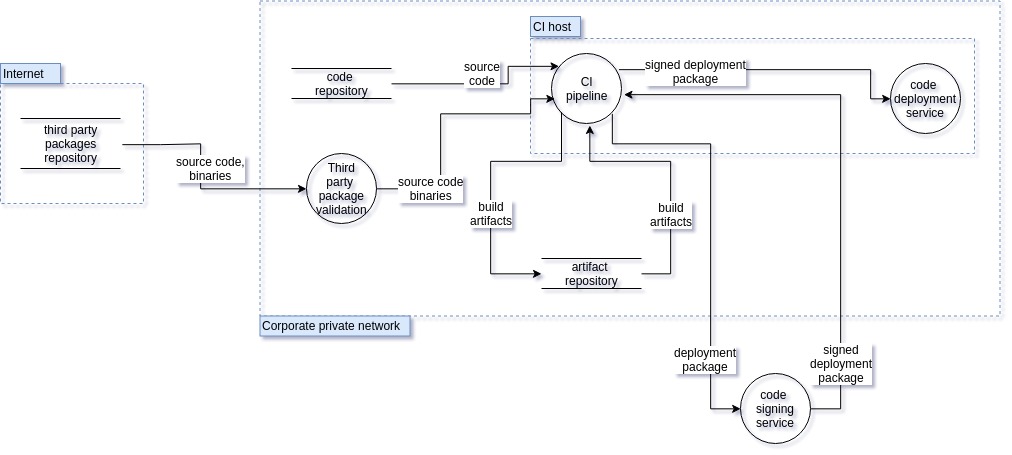

Architecture Dataflow diagram

Assets

Hardware

- TurtleBot 3 Burger is a small, ROS-enabled robot for education purposes.
  - Compute resources
    - Raspberry Pi 3 host: OS Raspbian Stretch with ROS 2 Crystal running natively (without Docker), running as root.
    - OpenCR board: using ROS 2 firmware as described in the TurtleBot3 ROS 2 setup instructions.
  - Hardware components include:
    - The Lidar is connected to the Raspberry Pi through USB.
    - A Raspberry Pi camera module is connected to the Raspberry Pi 3 through its Camera Serial Interface (CSI).
- Compute resources
  - Field testing host: conventional laptop running OS Ubuntu 18.04, no ROS installed. Running as a sudoer user.
  - Remote Host: any conventional server running OS Ubuntu 18.04 with ROS 2 Crystal.
  - CI host: any conventional server running OS Ubuntu 18.04.

- WLAN: a Wi-Fi local area network without security enabled, open for anyone to connect.

- Corporate private network: a secure corporate wide-area network that spans multiple cities. Only authenticated users with suitable credentials can connect to the network, and good security practices like password rotation are in place.

Processes

- Onboard TurtleBot3 Raspberry Pi
  - `turtlebot3_node`
  - CloudWatch nodes (hypothetical, as they have not yet been ported to ROS 2)
    - `cloudwatch_metrics_collector`: subscribes to the /metrics topic, where other nodes publish MetricList messages that specify CloudWatch metrics data, and sends the corresponding metric data to CloudWatch metrics using the PutMetricData API.
    - `cloudwatch_logger`: subscribes to a configured list of topics and publishes all messages found in those topics to a configured log group and log stream, using the CloudWatch metrics API.
  - Monitoring Nodes
    - `monitor_speed`: subscribes to the /odom topic and, for each received odometry message, extracts the linear and angular speed, builds a MetricList message with those values, and publishes that message to the /metrics topic.
    - `health_metric_collector`: collects system metrics (free RAM, total RAM, total CPU usage, per core CPU usage, uptime, number of processes) and publishes them as a MetricList message to the /metrics topic.
  - `raspicam2_node`: a node publishing Raspberry Pi Camera Module data to ROS 2.

- An XRCE Agent runs on the Raspberry Pi and is used by a DDS-XRCE client running on the OpenCR 1.0 board, which publishes IMU sensor data to ROS topics and controls the wheels of the TurtleBot based on the teleoperation messages published as ROS topics. This channel uses serial communication.

- A Lidar driver process running on the Raspberry Pi interfaces with the Lidar and uses a DDS-XRCE client to publish data over UDP to an XRCE agent also running on the Raspberry Pi. The agent sends the sensor data to several ROS topics.

- An SSH client process is running on the field testing host, connecting to the Raspberry Pi for diagnostics and debugging.

- A software update agent process is running on the Raspberry Pi, OpenCR board, and navigation hosts. The agent polls a code deployment service process running on the CI host for updates; the service responds with a list of packages and versions, and with a new version of each package when an update is required.

- The CI pipeline process on the CI host is a Jenkins instance that is polling a code repository for new revisions of a number of ROS packages. Each time a new revision is detected it rebuilds all the affected packages, packs the binary artifacts into several deployment packages, and eventually sends the package updates to the update agent when polled.
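To make the monitoring data flow concrete, the transformation performed by a node such as `monitor_speed` can be sketched without any ROS dependency. The `Vector3` and `Metric` dataclasses below are simplified stand-ins for the odometry twist fields and the MetricList entries, the metric names and units are hypothetical, and taking the vector norm is an assumption about how "speed" is derived; an actual node would use the ROS 2 client library and the real message types.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Vector3:
    """Stand-in for a 3D velocity vector from an odometry message."""
    x: float = 0.0
    y: float = 0.0
    z: float = 0.0


@dataclass
class Metric:
    """Stand-in for one entry of a MetricList message."""
    name: str
    value: float
    unit: str


def norm(v: Vector3) -> float:
    """Euclidean norm of a 3D vector."""
    return math.sqrt(v.x ** 2 + v.y ** 2 + v.z ** 2)


def speed_metrics(linear: Vector3, angular: Vector3) -> List[Metric]:
    """Build the metrics that would be published to the /metrics topic."""
    return [
        Metric("linear_speed", norm(linear), "m/s"),
        Metric("angular_speed", norm(angular), "rad/s"),
    ]
```

The sketch also illustrates why these metrics are listed as sensitive data later in this section: speed and orientation values leave the robot in a form from which its current task may be partially reconstructed.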

Software Dependencies

- OS / Kernel
  - Ubuntu 18.04
- Software
  - ROS 2 Core Libraries
  - ROS 2 Nodes: `joy_node`, `turtlebot3_node`, `raspicam2_node`, `teleop_twist_joy`
  - ROS 2 system dependencies as defined by rosdep:
    - RMW implementation: we assume the RMW implementation is `rmw_fastrtps`.
    - The threat model describes attacks with security enabled or disabled. If security is enabled, the security plugins are assumed to be configured and enabled.

See TurtleBot3 ROS 2 setup instructions for details about TurtleBot3 software dependencies.

External Actors

- A test engineer is testing the robot.

- A user operates the robot with the joystick.

- A business analyst periodically checks dashboards with performance information for the robot in the AWS Cloudwatch web console.

Robot Data Assets

- Topic Message

- Private Data

- Camera image messages

- Logging messages (might describe camera data)

- CloudWatch metrics and logs messages (could contain Intellectual Property such as which algorithms are implemented in a particular node).

- Restricted Data

- Robot Speed and Orientation. Some understanding of the current robot task may be reconstructed from those messages.

- AWS CloudWatch data

- Metrics, logs, and aggregated dashboard are all private data, as they are different serializations of the corresponding topics.

- System and ROS logs on the Raspberry Pi

- Private data just like CloudWatch logs.

- AWS credentials on all hosts

- Secret data, provide access to APIs and other compute assets on the AWS cloud.

- SSH credentials on all hosts

- Secret data, provide access to other hosts.

- Robot embedded algorithm (e.g. teleop_twist_joy)

- Secret data. Intellectual Property (IP) theft is a critical issue for nodes which are either easy to decompile or written in an interpreted language.

Robot Compute Assets

- AWS CloudWatch APIs and all other AWS resources accessible from the AWS credentials present in all the hosts.

- Robot Topics

- /cmd_vel could be abused to damage the robot or hurt users.
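One defensive measure against abusive velocity commands is to bound every command before it reaches the motors. The sketch below is a minimal, assumption-laden example: the `VelocityCommand` type is hypothetical, and the two limits are illustrative values that should be replaced with the figures from the platform documentation. It complements access control on the topic and does not replace it.

```python
import math
from dataclasses import dataclass

# Assumed limits, for illustration only.
MAX_LINEAR_M_S = 0.22
MAX_ANGULAR_RAD_S = 2.84


@dataclass(frozen=True)
class VelocityCommand:
    """Hypothetical stand-in for a velocity command message."""
    linear: float   # m/s
    angular: float  # rad/s


def clamp(value: float, limit: float) -> float:
    """Bound value to [-limit, limit]; NaN is replaced by 0.0 (stop)."""
    if math.isnan(value):
        return 0.0
    return max(-limit, min(limit, value))


def sanitize(cmd: VelocityCommand) -> VelocityCommand:
    """Return a command that is safe to forward to the motor controller."""
    return VelocityCommand(
        linear=clamp(cmd.linear, MAX_LINEAR_M_S),
        angular=clamp(cmd.angular, MAX_ANGULAR_RAD_S),
    )
```

NaN is handled explicitly because a plain `min`/`max` chain would silently turn it into the maximum allowed speed.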

Entry points

- Communication Channels

- DDS / ROS Topics

- Topics can be listened to or written to by any actor that:

- Is connected to a network where DDS packets are routed,

- Has the necessary permissions (read / write) if SROS is enabled.

- When SROS is enabled, attackers may try to compromise the CA or the private keys in order to generate or intercept private keys, or to issue malicious certificates that allow spoofing.

- A USB connection is used for communication between the Raspberry Pi and the OpenCR board and LIDAR sensor.

- SSH

- SSH access is possible to anyone on the same LAN or WAN (if port-forwarding is enabled). Many images are set up with a default username and password with administrative capabilities (e.g. sudoer).

- SSH access can be compromised by modifying the robot

- Deployed Software

- ROS nodes are compiled either by a third party (OSRF build-farm) or by the application developer. They can be compiled directly on the robot or copied from a developer workstation using scp or rsync.

- An attacker compromising the build-farm or the developer workstation could introduce a vulnerability in a binary which would then be deployed to the robot.

- Data Store (local filesystem)

- Robot Data

- System and ROS logs are stored on the Raspberry Pi filesystem.

- Robot system is subject to physical attack (physically removing the disk from the robot to read its data).

- Remote Host Data

- Machines running additional ROS nodes will also contain log files.

- Remote hosts are subject to physical attack. Additionally, these hosts may not be as secured as the robot host.

- Cloud Data

- AWS CloudWatch data is accessible from public AWS API endpoints, if credentials are available for the corresponding AWS account.

- AWS CloudWatch credentials can allow attackers to access other cloud resources depending on how the account has been configured.

- Secret Management

- DDS / ROS Topics

- If SROS is enabled, private keys are stored on the local filesystem.

- SSH

- SSH credentials are stored on the robot filesystem.

- Private SSH keys are stored in any computer allowed to log into the robot where public/private keys are relied on for authentication purposes.

- AWS Credentials

- AWS credentials are stored on the robot file system.
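Since SROS keys, SSH credentials, and AWS credentials all live on the local filesystem, a first sanity check is that those files are readable only by their owner. The following is a minimal sketch assuming POSIX permission bits; the function name is hypothetical and the directory to audit is left to the caller.

```python
import stat
from pathlib import Path
from typing import List


def find_exposed_files(root: Path) -> List[Path]:
    """Return regular files under root that group or other users can access."""
    exposed = []
    for path in sorted(root.rglob("*")):
        # Skip directories and symbolic links; only audit real files.
        if path.is_symlink() or not path.is_file():
            continue
        mode = path.stat().st_mode
        if mode & (stat.S_IRWXG | stat.S_IRWXO):
            exposed.append(path)
    return exposed
```

Such a check only covers accidental exposure to other local accounts. It does not help against an attacker with root privileges or physical access to the storage.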

Use case scenarios

- Development, Testing and Validation

- An engineer develops code or runs tests on the robot.

They may:

- Restart the robot

- Restart the ROS graph

- Physically interact with the robot

- Log into the robot using SSH

- Check the AWS console for metrics and logs

- End-User

- A user tele-operates the robot.

They may:

- Start the robot.

- Control the joystick.

- A business analyst may access AWS CloudWatch data on the console to assess the robot performance.

Threat model

Each generic threat described in the previous section can be instantiated on the TurtleBot 3.

This table indicates which TurtleBot assets and entry points are impacted by each threat. A check sign (✓) means impacted while a cross sign (✘) means not impacted. The “SROS Enabled?” column states whether the threat remains applicable when SROS is used: a check sign (✓) means that the threat can be exploited while SROS is enabled, while a cross sign (✘) means that the threat requires SROS to be disabled to be applicable.

| Threat | TurtleBot Assets: Human Assets | TurtleBot Assets: Robot App. | Entry Points: DDS Topic | Entry Points: OSRF Build-farm | Entry Points: SSH | SROS Enabled? | Attack | Mitigation | Mitigation Result (redesign / transfer / avoid / accept) | Additional Notes / Open Questions |

|---|---|---|---|---|---|---|---|---|---|---|

| Embedded / Software / Communication / Inter-Component Communication | ||||||||||

| An attacker spoofs a component identity. | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | Without SROS any node may have any name so spoofing is trivial. |

|

Risk is reduced if SROS is used. | Authentication codes need to be generated for every pair of participants rather than every participant sharing a single authentication code with all other participants. Refer to section 7.1.1.3 of the DDS Security standard. |

| ✓ | ✓ | ✓ | ✘ | ✘ | ✓ | An attacker deploys a malicious node which is not enabling DDS Security Extension and spoofs the `joy_node` forcing the robot to stop. |

|

Risk is mitigated. |

|

|

| ✓ | ✓ | ✓ | ✘ | ✘ | ✓ | An attacker steals node credentials and spoofs the joy_node forcing the robot to stop. |

|

Mitigating risk requires additional work. |

|

|

| An attacker intercepts and alters a message. | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | Without SROS an attacker can modify /cmd_vel messages sent through a network connection to e.g. stop the robot. |

|

Risk is reduced if SROS is used. | Additional hardening could be implemented by forcing some of the TurtleBot topics to only be communicated over shared memory. |

| An attacker writes to a communication channel without authorization. | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | Without SROS, any node can publish to any topic. |

|

Risk is mitigated. | |

| ✓ | ✓ | ✓ | ✘ | ✘ | ✓ | An attacker obtains credentials and publishes messages to /cmd_vel |

|

Transfer risk to user configuring permissions.xml | permissions.xml should ideally be generated as writing it manually is cumbersome and error-prone. |

|

| An attacker listens to a communication channel without authorization. | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | Without SROS: any node can listen to any topic. |

|

Risk is mitigated if SROS is used. | |

| ✓ | ✓ | ✓ | ✘ | ✘ | ✓ | DDS participants are enumerated and fingerprinted to look for potential vulnerabilities. |

|

Risk is mitigated if DDS-Security is configured appropriately. | ||

| ✓ | ✓ | ✓ | ✘ | ✘ | ✓ | TurtleBot camera images are saved to a remote location controlled by the attacker. |

|

Risk is mitigated if DDS-Security is configured appropriately. | ||

| ✓ | ✓ | ✓ | ✘ | ✘ | ✓ | TurtleBot LIDAR measurements are saved to a remote location controlled by the attacker. | Local communication using XRCE should be done over the loopback interface. | This doesn't protect the serial communication from the LIDAR sensor to the Raspberry Pi. | ||

| An attacker prevents a communication channel from being usable. | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | Without SROS: any node can "spam" any other component. |

|

Risk may be reduced when using SROS. | |

| ✓ | ✓ | ✓ | ✘ | ✘ | ✓ | A node can "spam" another node it is allowed to communicate with. |

|

Mitigating risk requires additional work. | How to enforce when nodes are malicious? Observe and kill? | |

| Embedded / Software / Communication / Long-Range Communication (e.g. WiFi, Cellular Connection) | ||||||||||

| An attacker hijacks robot long-range communication | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | An attacker connects to the same unprotected WiFi network as a TurtleBot. |

|

Risk is reduced for DDS if SROS is used. Other protocols may still be vulnerable. | Enforcing communication through a VPN could be an idea (see PR2 manual for a reference implementation) or only DDS communication could be allowed on long-range links (SSH could be replaced by e.g. adbd and be only available using a dedicated short-range link). |

| An attacker intercepts robot long-range communications (e.g. MitM) | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | An attacker connects to the same unprotected WiFi network as a TurtleBot. |

|

Risk is reduced for DDS if SROS is used. Other protocols may still be vulnerable. | |

| An attacker disrupts (e.g. jams) robot long-range communication channels. | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | Jam the WiFi network a TurtleBot is connected to. | If network connectivity is lost, switch to cellular network. | Mitigating is impossible on TurtleBot (no secondary long-range communication system). | |

| Embedded / Software / Communication / Short-Range Communication (e.g. Bluetooth) | ||||||||||

| An attacker executes arbitrary code using a short-range communication protocol vulnerability. | ✓ | ✓ | ✓ | ✘ | ✓ | ✘/✓ | Attacker runs a BlueBorne attack to execute arbitrary code on the TurtleBot Raspberry Pi. | A potential mitigation may be to build a minimal kernel with e.g. Yocto which does not enable features the robot does not use. In this particular case, the TurtleBot does not require Bluetooth but OS images enable it by default. | ||

| Embedded / Software / OS & Kernel | ||||||||||

| An attacker compromises the real-time clock to disrupt the kernel RT scheduling guarantees. | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | A malicious node attempts to write a compromised kernel to /boot |

TurtleBot / Zymbit Key integration will mostly mitigate this threat. | Some level of mitigation will be possible through TurtleBot / Zymkey integration. SecureBoot support would probably be needed to completely mitigate this threat. | |

| ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | A malicious actor sends incorrect NTP packets to enable other attacks (allow the use of expired certificates) or to prevent the robot software from behaving properly (time between sensor readings could be miscomputed). |

|

TurtleBot / Zymbit Key integration and following best practices for NTP configuration will mostly mitigate this threat. | ||

| An attacker compromises the OS or kernel to alter robot data. | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | A malicious node attempts to write a compromised kernel to /boot |

TurtleBot / Zymbit Key integration will mostly mitigate this threat. | ||

| An attacker compromises the OS or kernel to eavesdrop on robot data. | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | A malicious node attempts to write a compromised kernel to /boot |

TurtleBot / Zymbit Key integration will mostly mitigate this threat. | ||

| An attacker gains access to the robot OS through its administration interface. | ✓ | ✓ | ✘ | ✘ | ✓ | ✘/✓ | An attacker connects to the TurtleBot using the Raspbian default username and password (pi / raspberry) |

|

Demonstrating a mitigation would require significant work. Building out an image using Yocto without a default password could be a way forward. | SSH is significantly increasing the attack surface. Adbd may be a better solution to handle administrative access. |

| ✓ | ✓ | ✘ | ✘ | ✓ | ✘/✓ | An attacker intercepts SSH credentials. |

|

Demonstrating a mitigation based on replacing sshd by adbd would require significant work. | ||

| Embedded / Software / Component-Oriented Architecture | ||||||||||

| A node accidentally writes incorrect data to a communication channel. | ✓ | ✓ | ✓ | ✘ | ✘ | ✘/✓ | TurtleBot joy_node could accidentally publish large or randomized joystick values. |

|

Mitigation would require a significant amount of engineering. | This task would require designing and implementing a new system from scratch in ROS 2 to document node interactions. |

| An attacker deploys a malicious node on the robot. | ✓ | ✓ | ✘ | ✓ | ✓ | ✘/✓ | TurtleBot is running a malicious joy_node. This node steals robot credentials, tries to kill other processes, and fills the disk with random data. |

|

It is possible to mitigate this attack on the TurtleBot by creating multiple user accounts. | While the one-off solution involving creating user accounts manually is interesting, it would be better to generalize this through some kind of mechanism (e.g. AppArmor / SecComp support for roslaunch) |

| ✓ | ✓ | ✘ | ✓ | ✓ | ✘/✓ | TurtleBot joy_node repository is compromised by an attacker and malicious code is added to the repository. joy_node is released again and the target TurtleBot is compromised when the node gets updated. |

|

Risk is mitigated by enforcing processes around source control but the end-to-end story with respect to deployment is still unclear. | Mitigation of this threat is also detailed in the diagram below this table. | |

| An attacker can prevent a component running on the robot from executing normally. | ✓ | ✓ | ✘ | ✘ | ✓ | ✘/✓ | A malicious TurtleBot joy_node is randomly killing other nodes to disrupt the robot behavior. |

|

Risk is mitigated for the TurtleBot platform. This approach is unlikely to scale with robots whose nodes cannot be restarted at any time safely though. | Systemd should be investigated as another way to handle and/or run ROS nodes. |

| Embedded / Software / Configuration Management | ||||||||||

| An attacker modifies configuration values without authorization. | ✓ | ✓ | ✘ | ✓ | ✘ | Node parameters are freely modifiable by any DDS domain participant. | ||||

| An attacker accesses configuration values without authorization. | ✓ | ✓ | ✘ | ✓ | ✘ | Node parameter values can be read by any DDS domain participant. | ||||

| A user accidentally misconfigures the robot. | ✓ | ✓ | ✘ | ✘ | ✘ | Node parameter values can be modified by anyone to any value. | ||||

| Embedded / Software / Data Storage (File System) | ||||||||||

| An attacker modifies the robot file system by physically accessing it. | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | TurtleBot SD card is physically accessed and a malicious daemon is installed. | Use a TPM ZymKey to encrypt the filesystem (using dm-crypt / LUKS) | TurtleBot / Zymbit Key integration will mitigate this threat. | |

| An attacker eavesdrops on the robot file system by physically accessing it. | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | TurtleBot SD card is cloned to steal robot logs, credentials, etc. | Use a TPM ZymKey to encrypt the filesystem (using dm-crypt / LUKS) | TurtleBot / Zymbit Key integration will mitigate this threat. | |

| An attacker saturates the robot disk with data. | ✓ | ✓ | ✘ | ✓ | ✘ | ✘/✓ | TurtleBot is running a malicious joy_node. This node steals robot credentials, tries to kill other processes, and fills the disk with random data. |

|

A separate user account with disk quota enabled may be a solution but this needs to be demonstrated. | |

| Embedded / Software / Logs | ||||||||||

| An attacker exfiltrates log data to a remote server. | ✘ | ✘ | ✘ | ✓ | ✘ | ✘/✓ | TurtleBot logs are exfiltrated by a malicious joy_node. |

|

Risk is mitigated by limiting access to logs. However, how to handle logs once stored requires more work. Ensuring no sensitive data is logged is also a challenge. | Production node logs should be scanned to detect leakage of sensitive information. |

| Embedded / Hardware / Sensors | ||||||||||

| An attacker spoofs a robot sensor (by e.g. replacing the sensor itself or manipulating the bus). | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | The USB connection of the TurtleBot laser scanner is modified to be turned off under some conditions. | |||

| Embedded / Hardware / Actuators | ||||||||||

| An attacker spoofs a robot actuator. | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | TurtleBot connection to the OpenCR board is intercepted, motor control commands are altered. | Enclose OpenCR board and Raspberry Pi with a case and use ZymKey perimeter breach protection. | Robot can be rendered inoperable if perimeter is breached. | |

| An attacker modifies the command sent to the robot actuators. (intercept & retransmit) | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | TurtleBot connection to the OpenCR board is intercepted, motor control commands are altered. | Enclose OpenCR board and Raspberry Pi with a case and use ZymKey perimeter breach protection. | Robot can be rendered inoperable if perimeter is breached. | |

| An attacker intercepts the robot actuators command (can recompute localization). | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | TurtleBot connection to the OpenCR board is intercepted, motor control commands are logged. | Enclose OpenCR board and Raspberry Pi with a case and use ZymKey perimeter breach protection. | Robot can be rendered inoperable if perimeter is breached. | |

| An attacker sends malicious command to actuators to trigger the E-Stop | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | ||||

| Embedded / Hardware / Ancillary Functions | ||||||||||

| An attacker compromises the software or sends malicious commands to drain the robot battery. | ✓ | ✓ | ✘ | ✘ | ✘ | ✘/✓ | ||||

| Remote / Client Application | ||||||||||

| An attacker intercepts the user credentials on their desktop machine. | ✓ | ✓ | ✓ | ✘ | ✓ | ✓ | A roboticist connects to the robot with Rviz from their development machine. Another user with root privileges steals the credentials. |

|

||

| Remote / Cloud Integration | ||||||||||

| An attacker intercepts cloud service credentials deployed on the robot. | ✓ | ✓ | ✓ | ✘ | ✓ | ✓ | TurtleBot CloudWatch node credentials are extracted from the filesystem through physical access. |

|

||

| An attacker alters or deletes robot cloud data. | ✓ | ✓ | ✓ | ✘ | ✓ | ✓ | TurtleBot CloudWatch cloud data is accessed by an unauthorized user. | |||

| An attacker alters or deletes robot cloud data. | ✓ | ✓ | ✘ | ✘ | ✘ | ✓ | TurtleBot CloudWatch data is deleted by an attacker. |

|

||

| Remote / Software Deployment | ||||||||||

| An attacker spoofs the deployment service. | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||

| An attacker modifies the binaries sent by the deployment service. | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||

| An attacker intercepts the binaries sent by the deployment service. | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||

| An attacker prevents the robot and the deployment service from communicating. | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||
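The table above leaves open how to deal with a node that "spams" a peer it is allowed to communicate with ("Observe and kill?"). The observation half could be sketched as a sliding-window rate monitor like the one below. This is only an illustrative sketch: the `RateMonitor` class, its thresholds, and the idea of keying on a sender name are assumptions, and deciding what to do with a flagged sender is out of scope here.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class RateMonitor:
    """Flag senders that exceed max_messages within a sliding time window."""

    def __init__(self, max_messages: int, window_s: float) -> None:
        self.max_messages = max_messages
        self.window_s = window_s
        self._stamps: Dict[str, Deque[float]] = defaultdict(deque)

    def record(self, sender: str, now: float) -> bool:
        """Record one message received at time `now` (in seconds).

        Returns True if the sender is over the limit after this message.
        """
        stamps = self._stamps[sender]
        stamps.append(now)
        # Drop timestamps that have fallen out of the window.
        while stamps and now - stamps[0] > self.window_s:
            stamps.popleft()
        return len(stamps) > self.max_messages
```

A monitor of this kind only detects the behavior. It relies on the sender identity being trustworthy, which in turn depends on authentication being enabled.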

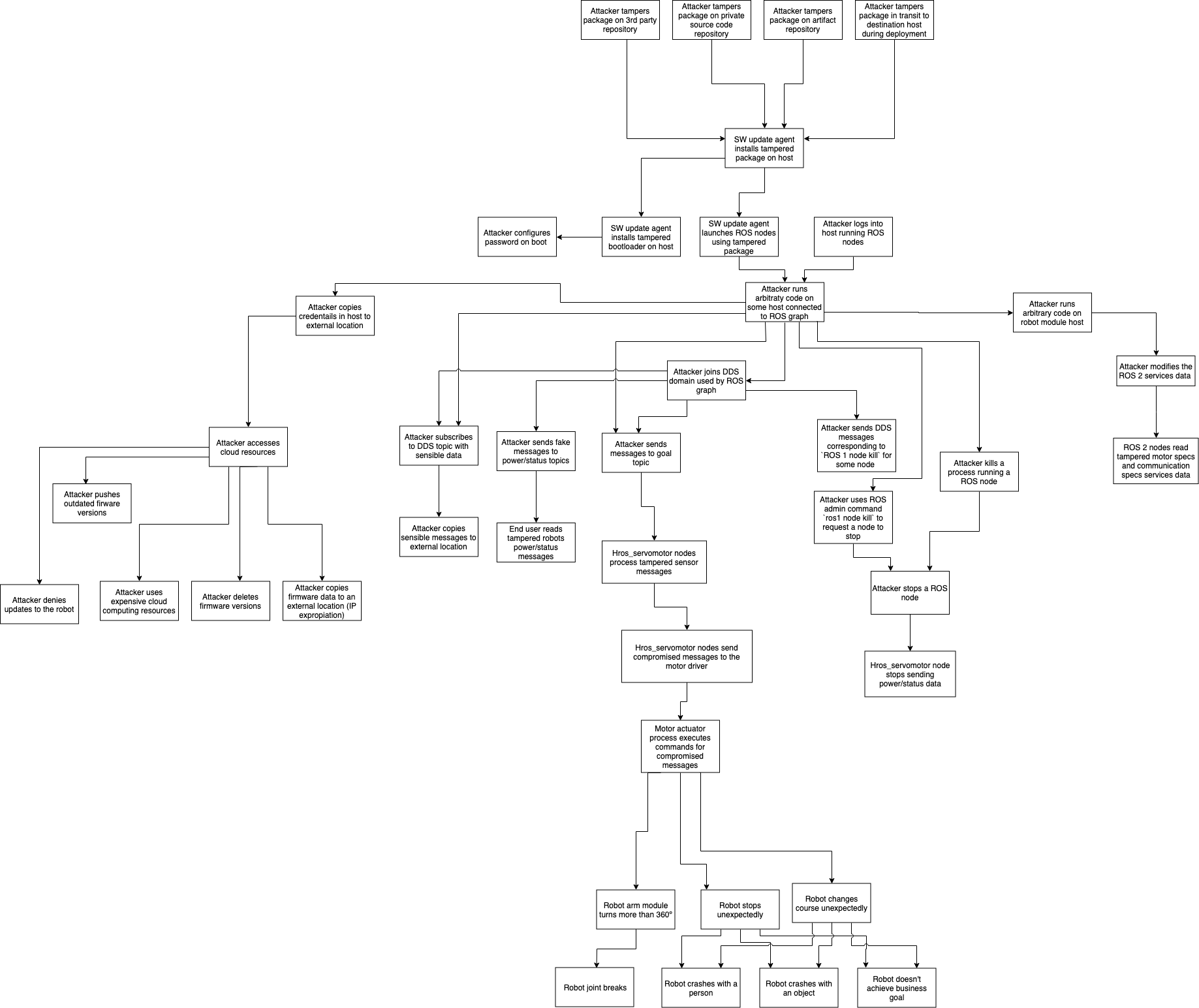

Threat Diagram: An attacker deploys a malicious node on the robot

This diagram details how code is built and deployed for the “An attacker deploys a malicious node on the robot” attack.

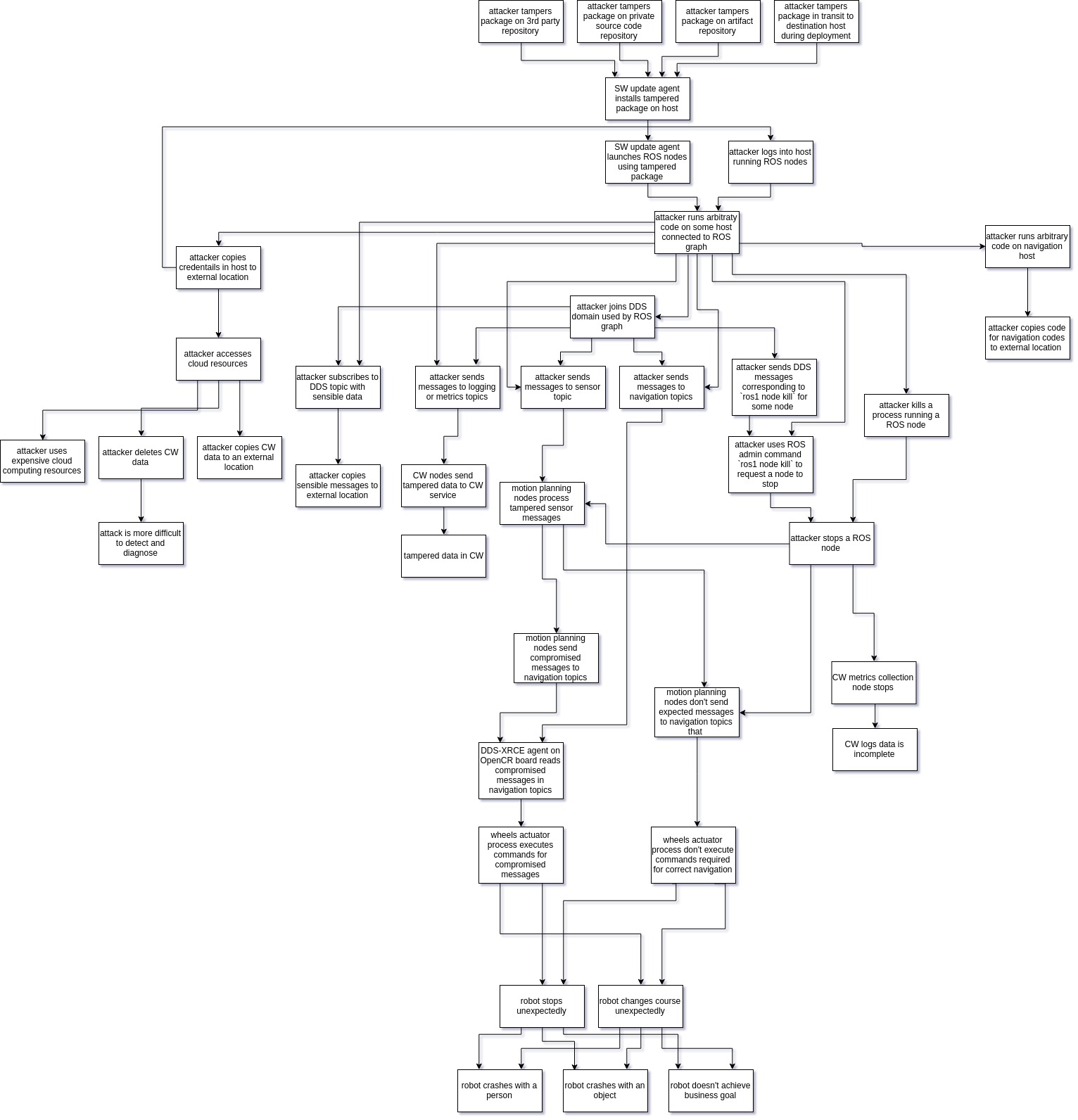

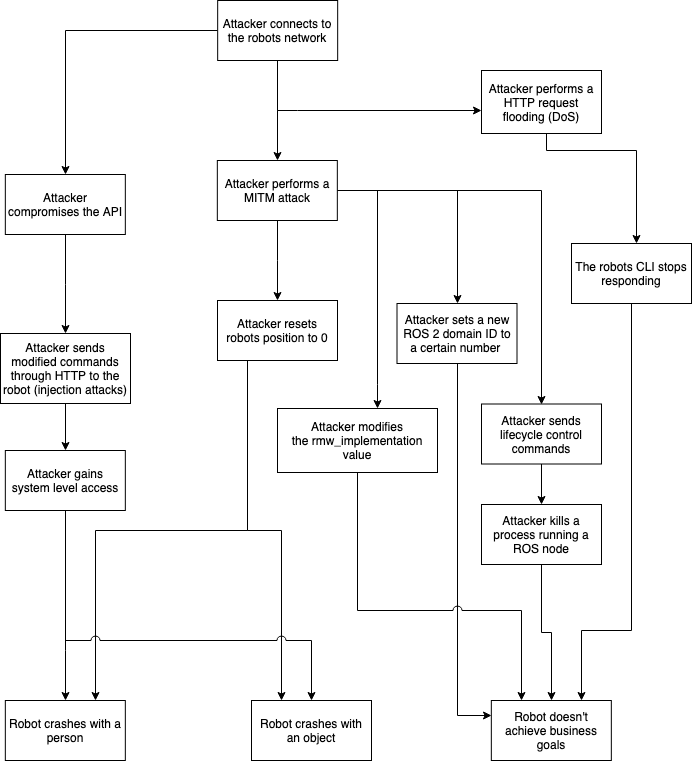

Attack Tree

Threats can be organized in the following attack tree.

Threat Model Validation Strategy

Validating this threat model end-to-end is a long-term effort meant to be distributed among the whole ROS 2 community. The threats list is made to evolve with this document and additional reference platforms may be introduced in the future.

- Set up a TurtleBot with the exact software described in the TurtleBot3 section of this document.

- Penetration Testing

- Attacks described in the spreadsheet should be implemented. For instance, a malicious joy_node could be implemented to try to disrupt the robot operations.

- Once the vulnerability has been exploited, the exploit should be released to the community so that the results can be reproduced.

- Whether the attack has been successful or not, this document should be updated accordingly.

- If the attack was successful, a mitigation strategy should be implemented. It can either be done through improving ROS 2 core packages or it can be a platform-specific solution. In the second case, the mitigation will serve as an example to publish best practices for the development of secure robotic applications.

Over time, new reference platforms may be introduced in addition to the TurtleBot 3 to define new attacks and allow other types of mitigations to be evaluated.

Threat Analysis for the MARA Robotic Platform

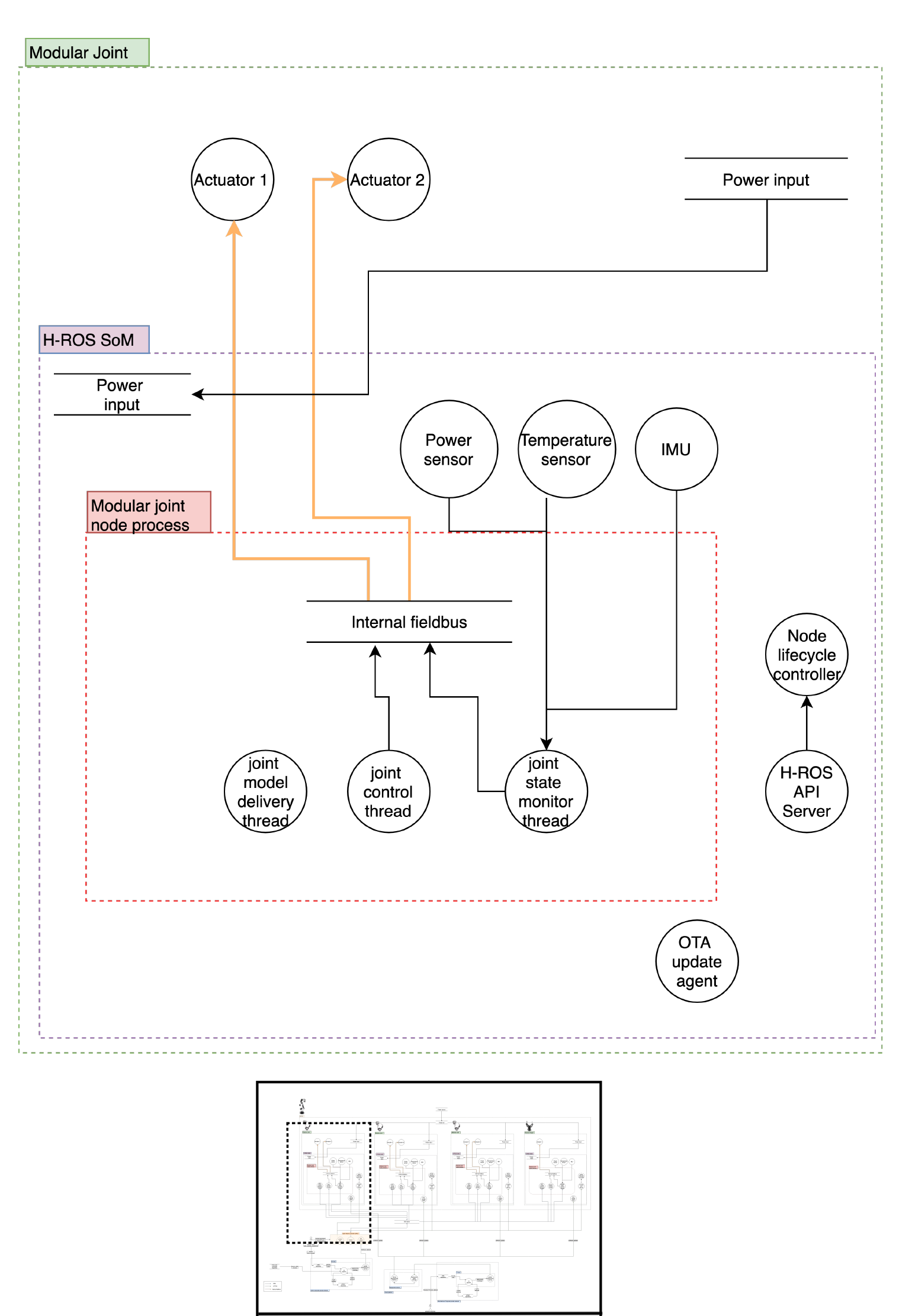

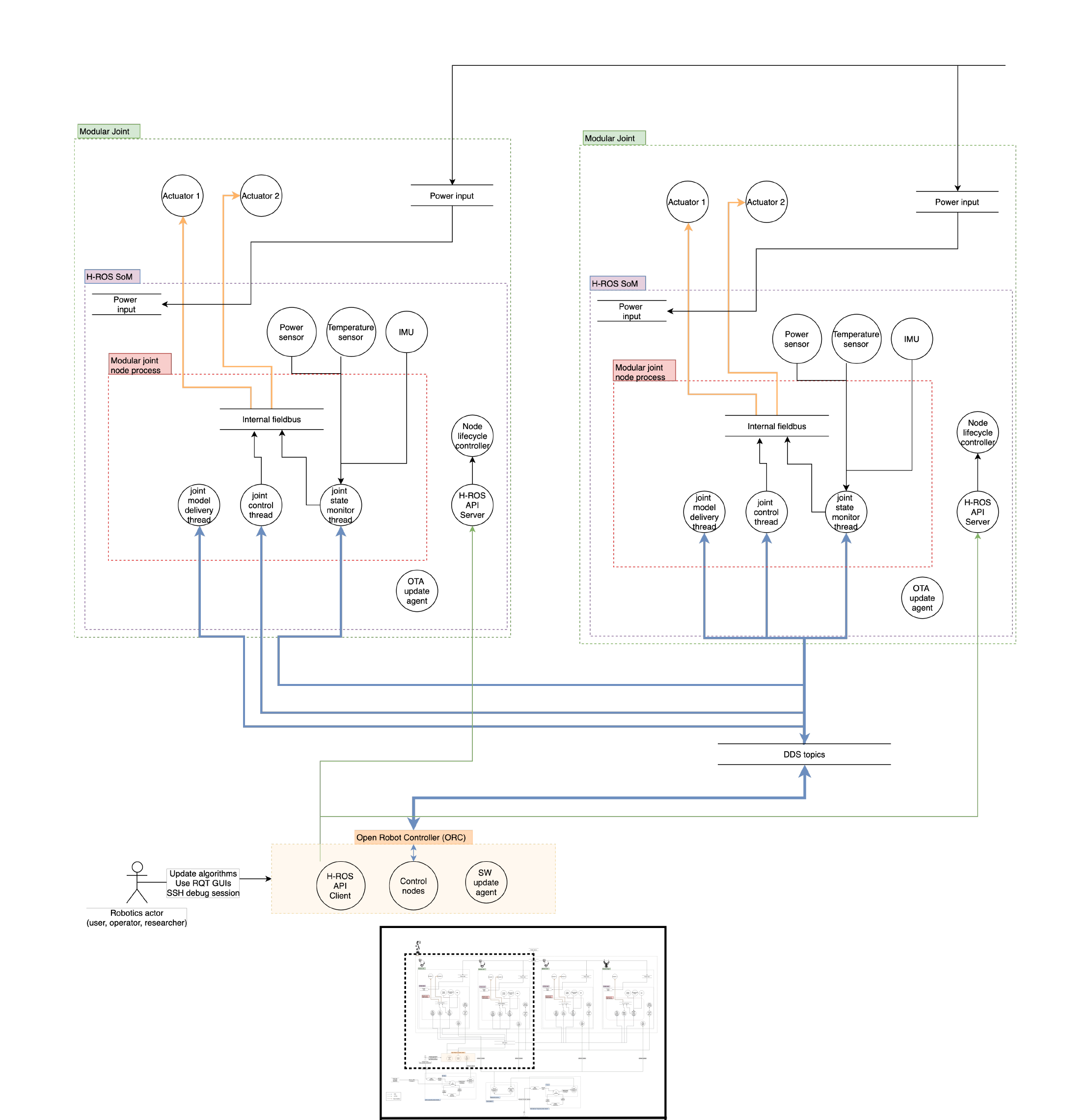

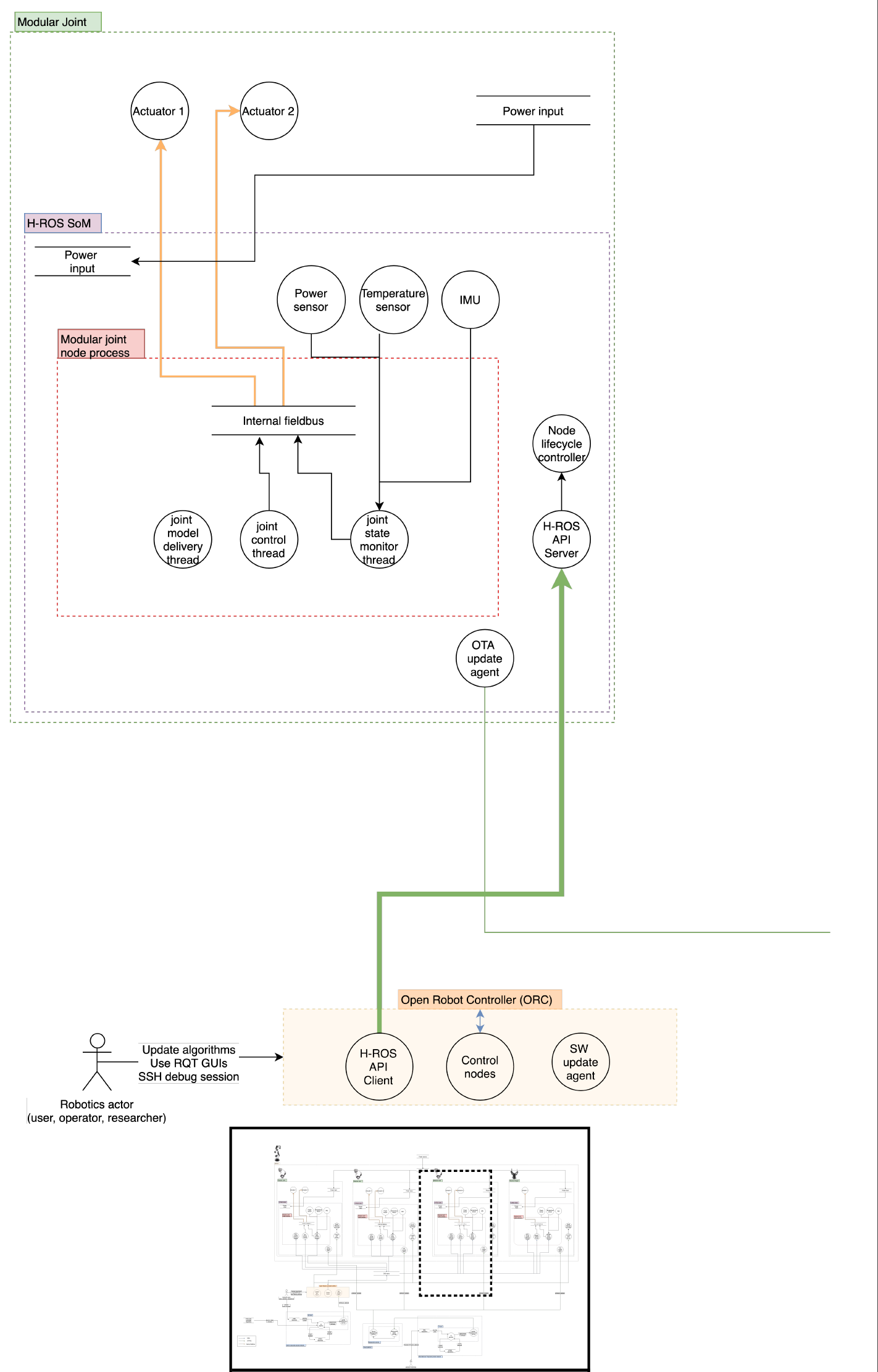

System description

The application considered in this section is a MARA modular robot operating in an industrial environment while performing a pick & place activity. MARA is the first robot to run ROS 2 natively. It is an industrial-grade collaborative robotic arm which runs ROS 2 on each joint, end-effector, external sensor or even on its industrial controller. Through the H-ROS communication bus, MARA is empowered with new possibilities in the professional landscape of robotics. It offers millisecond-level distributed bounded latencies for usual payloads and submicrosecond-level synchronization capabilities across ROS 2 components.

Built out of individual modules that natively run on ROS 2, MARA can be physically extended in a seamless manner. However, this also moves towards more networked robots and production environments which brings new challenges, especially in terms of security and safety.

The robot considered is a MARA, a 6 Degrees of Freedom (6DoF) modular and collaborative robotic arm with 3 kg of payload and repeatability below 0.1 mm. The robot can reach angular speeds up to 90°/second and has a reach of 656 mm. The robot contains a variety of sensors on each joint and can be controlled from any industrial controller that supports ROS 2 and uses the HRIM information model. Each of the modules contains the H-ROS communication bus for robots enabled by the H-ROS SoM, which delivers real-time, security and safety capabilities for ROS 2 at the module level.

No information is provided about how ROS 2 nodes are distributed on each module. Each joint offers the following ROS 2 API capabilities as described in their documentation (MARA joint):

- Topics

- GoalRotaryServo.msg: model that allows controlling the position, velocity and/or effort (generated from models/actuator/servo/topics/goal.xml, see HRIM for more).

- StateRotaryServo.msg: publishes the status of the motor.

- Power.msg: publishes the power consumption.

- Status.msg: informs about the resources that are consumed by the H-ROS SoM.

- StateCommunication.msg: informs about the state of the device network.

- Services

- ID.srv: publishes the general identity of the component.

- Simulation3D.srv and SimulationURDF.srv: send the URDF and the 3D model of the modular component.

- SpecsCommunication.srv: a HRIM service which reports the specs of the device network.

- SpecsRotaryServo.srv: a HRIM service which reports the main features of the device.

- EnableDisable.srv: disables or enables the servo motor.

- ClearFault.srv: sends a request to clear any fault in the servo motor.

- Stop.srv: requests to stop any ongoing movement.

- OpenCloseBrake.srv: opens or closes the servo motor brake.

- Actions:

- GoalJointTrajectory: allows moving the joint using a trajectory message.

This API translates into the following abstractions:

| Topic | Name |

|---|---|

| goal | /hrim_actuation_servomotor_XXXXXXXXXXXX/goal_axis1 |

| goal | /hrim_actuation_servomotor_XXXXXXXXXXXX/goal_axis2 |

| state | /hrim_actuation_servomotor_XXXXXXXXXXXX/state_axis1 |

| state | /hrim_actuation_servomotor_XXXXXXXXXXXX/state_axis2 |

| Service | Name |

|---|---|

| specs | /hrim_actuation_servomotor_XXXXXXXXXXXX/specs |

| enable servo | /hrim_actuation_servomotor_XXXXXXXXXXXX/enable |

| disable servo | /hrim_actuation_servomotor_XXXXXXXXXXXX/disable |

| clear fault | /hrim_actuation_servomotor_XXXXXXXXXXXX/clear_fault |

| stop | /hrim_actuation_servomotor_XXXXXXXXXXXX/stop_axis1 |

| stop | /hrim_actuation_servomotor_XXXXXXXXXXXX/stop_axis2 |

| close brake | /hrim_actuation_servomotor_XXXXXXXXXXXX/close_brake_axis1 |

| close brake | /hrim_actuation_servomotor_XXXXXXXXXXXX/close_brake_axis2 |

| open brake | /hrim_actuation_servomotor_XXXXXXXXXXXX/open_brake_axis1 |

| open brake | /hrim_actuation_servomotor_XXXXXXXXXXXX/open_brake_axis2 |

| Action | Name |

|---|---|

| goal joint trajectory | /hrim_actuation_servomotor_XXXXXXXXXXXX/trajectory_axis1 |

| goal joint trajectory | /hrim_actuation_servomotor_XXXXXXXXXXXX/trajectory_axis2 |
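The naming pattern in the tables above is regular enough to be generated, which can help when enumerating the names that access-control rules need to cover. The sketch below only mirrors the names listed in the tables. The helper names are hypothetical, and `device_id` stands for the `XXXXXXXXXXXX` placeholder, whose actual format is not specified here.

```python
from typing import Dict

PREFIX = "/hrim_actuation_servomotor_"

# Name stems taken from the tables above.
PER_MODULE = ("specs", "enable", "disable", "clear_fault")
PER_AXIS = ("goal", "state", "stop", "close_brake", "open_brake", "trajectory")


def module_names(device_id: str) -> Dict[str, str]:
    """Names that exist once per module."""
    return {stem: f"{PREFIX}{device_id}/{stem}" for stem in PER_MODULE}


def axis_names(device_id: str, axis: int) -> Dict[str, str]:
    """Names that exist once per axis (each joint has axis 1 and axis 2)."""
    if axis not in (1, 2):
        raise ValueError("axis must be 1 or 2")
    return {stem: f"{PREFIX}{device_id}/{stem}_axis{axis}" for stem in PER_AXIS}
```

For example, `axis_names(device_id, 1)["goal"]` yields the `goal_axis1` topic name of the given module.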

| Parameter | Description |

|---|---|

| ecat_interface | Ethercat interface |

| reduction_ratio | Factor to calculate the position of the motor |

| position_factor | Factor to calculate the position of the motor |

| torque_factor | Factor to calculate the torque of the motor |

| count_zeros_axis1 | Axis 1 absolute value of the encoder for the zero position |

| count_zeros_axis2 | Axis 2 absolute value of the encoder for the zero position |

| enable_logging | Enable/Disable logging |

| axis1_min_position | Axis 1 minimum position in radians |

| axis1_max_position | Axis 1 maximum position in radians |

| axis1_max_velocity | Axis 1 maximum velocity in radians/s |

| axis1_max_acceleration | Axis 1 maximum acceleration in radians/s^2 |

| axis2_min_position | Axis 2 minimum position in radians |

| axis2_max_position | Axis 2 maximum position in radians |

| axis2_max_velocity | Axis 2 maximum velocity in radians/s |

| axis2_max_acceleration | Axis 2 maximum acceleration in radians/s^2 |
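The per-axis limit parameters above suggest a simple check that a controller or a monitoring component could apply to incoming goals. The following is a minimal sketch under stated assumptions: the `AxisLimits` type and the numeric limits are hypothetical, and acceleration limits are left out for brevity.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class AxisLimits:
    """Hypothetical container for the per-axis limit parameters."""
    min_position: float  # radians
    max_position: float  # radians
    max_velocity: float  # radians/s


def goal_within_limits(limits: AxisLimits, position: float, velocity: float) -> bool:
    """Return True only if the goal respects the position and velocity limits.

    NaN inputs fail every comparison, so they are rejected.
    """
    position_ok = limits.min_position <= position <= limits.max_position
    velocity_ok = abs(velocity) <= limits.max_velocity
    return position_ok and velocity_ok


# Illustrative values only: +/- 180 degrees of travel, 90 degrees/s maximum speed.
EXAMPLE_LIMITS = AxisLimits(-math.pi, math.pi, math.radians(90))
```

Since node parameters can be modified by other participants when access control is not enforced, a check like this is only as trustworthy as the limits it reads.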

The controller used in the application is a ROS 2-enabled industrial PC, specifically, an ORC. This PC also features the H-ROS communication bus for robots which commands MARA in a deterministic manner. This controller makes direct use of the aforementioned communication abstractions (topics, services and actions).

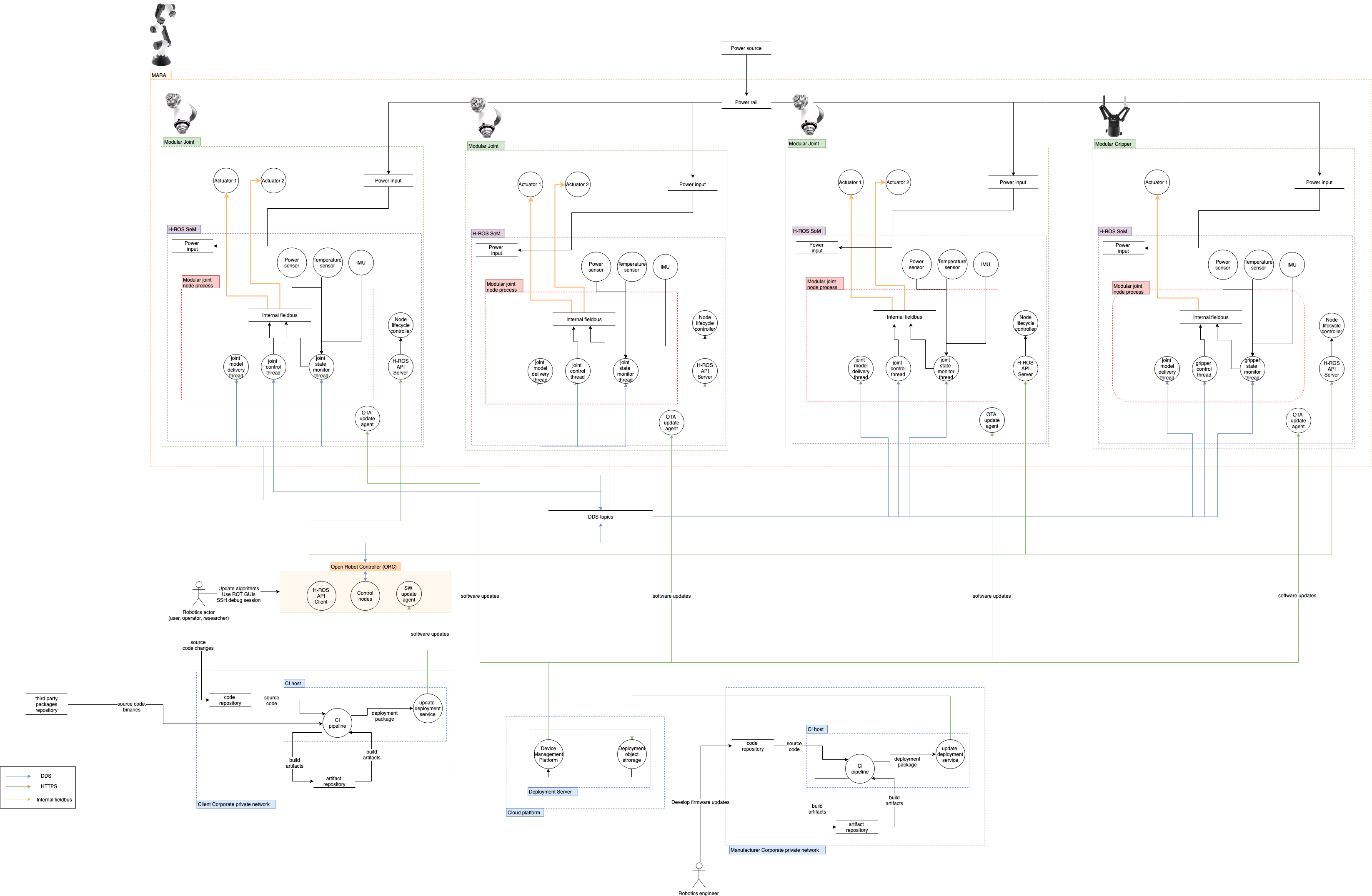

Architecture Dataflow diagram

Assets

This section describes the components and specifications within the MARA robot environment. The different aspects of the robot are listed and detailed below under Hardware, Software and Network. The external actors and data assets are described independently.

Hardware

- 1 x MARA modular robot: a modular manipulator, ROS 2 enabled robot for industrial automation purposes.

- Robot modules

- 3 x Han’s Robot Modular Joints: 2 DoF electrical motors that include precise encoders and an electro-mechanical braking system for safety purposes. Available in a variety of different torque and size combinations, going from 2.8 kg to 17 kg weight and from 9.4 Nm to 156 Nm rated torque.

- Mechanical connector: H-ROS connector A

- Power input: 48 Vdc

- Communication: H-ROS robot communication bus

- Link layer: 2 x Gigabit (1 Gbps) TSN Ethernet network interface

- Middleware: Data Distribution Service (DDS)

- On-board computation: Dual core ARM® Cortex-A9

- Operating System: Real-Time Linux

- ROS 2 version: Crystal Clemmys

- Information model: HRIM Coliza

- Security:

- DDS crypto, authentication and access control plugins

- 1 x Robotiq Modular Gripper: ROS 2 enabled industrial end-of-arm-tooling.

- Mechanical connector: H-ROS connector A

- Power input: 48 Vdc

- Communication: H-ROS robot communication bus

- Link layer: 2 x Gigabit (1 Gbps) TSN Ethernet network interface

- Middleware: Data Distribution Service (DDS)

- On-board computation: Dual core ARM® Cortex-A9

- Operating System: Real-Time Linux

- ROS 2 version: Crystal Clemmys

- Information model: HRIM Coliza

- Security:

- DDS crypto, authentication and access control plugins

- 1 x Industrial PC: the ORC includes:

- CPU: Intel i7 @ 3.70GHz (6 cores)

- RAM: Physical 16 GB DDR4 2133 MHz

- Storage: 256 GB M.2 SSD interface PCIExpress 3.0

- Communication: H-ROS robot communication bus

- Link layer: 2 x Gigabit (1 Gbps) TSN Ethernet network interface

- Middleware: Data Distribution Service (DDS)

- Operating System: Real-Time Linux

- ROS 2 version: Crystal Clemmys

- Information model: HRIM Coliza

- Security:

- DDS crypto, authentication and access control plugins

- 1 x Update Server: OTA

- Virtual Machine running on AWS EC2

- Operating System: Ubuntu 18.04.2 LTS

- Software: Mender OTA server

Network

- Ethernet time sensitive (TSN) internal network: Interconnection of modular joints in the MARA Robot is performed over a daisy chained Ethernet TSN channel. Each module acts as a switch, forming the internal network of the robot.

- Manufacturer (Acutronic Robotics) corporate private network: A secure corporate wide-area network that spans multiple cities. Only authenticated users with suitable credentials can connect to the network, and good security practices like password rotation are in place. This network is used to develop and deploy updates in the robotic systems. Managed by the original manufacturer.

- End-user corporate private network: A secure corporate wide-area network that spans multiple cities. Only authenticated users with suitable credentials can connect to the network, and good security practices like password rotation are in place.

- Cloud network: A VPC network residing in a public cloud platform, containing multiple servers. The network follows good security practices, like implementation of security appliances, user password rotation and multi-factor authentication. Only allowed users can access the network. The OTA service is open to the internet.

Uses of the cloud network:

- Manufacturer (Acutronic Robotics) uploads OTA artifacts from their Manufacturer corporate private network to the Cloud Network.

- Robotic Systems on the End-user corporate private network fetch those artifacts from the Cloud Network.

- Robotic Systems on the End-user corporate private network send telemetry data for predictive maintenance to the Cloud Network.

Software processes

In this section all the processes running on the robotic system in scope are detailed.

- Onboard Modular Joints

- hros_servomotor_hans_lifecyle_node: this node is in charge of controlling the motors inside the H-ROS SoM. It exposes the services, actions and topics described below. It publishes joint states, joint velocities and joint efforts. It provides a topic for servoing the modular joint and an action that waits for a trajectory.

- hros_servomotor_hans_generic_node: a node which contains several generic services and topics with information about the modular joint, such as power measurement readings (voltage and current), status about the H-ROS SoM like CPU load or network stats, specifications about communication and cpu, the URDF or even the mesh file of the modular joint.

- Mender (OTA client) runs inside the H-ROS SoM. When an update is launched, the client downloads the new version, which is installed and becomes available when the device is rebooted.

- Onboard Modular Gripper: Undisclosed.

- Onboard Industrial PC:

- MoveIt! motion planning framework.

- Manufacturing process control applications.

- Robot teleoperation utilities.

- ROS 1 / ROS 2 bridges: These bridges are needed to be able to run MoveIt!, which is not yet ported to ROS 2. Right now there is an effort in the community to port this tool to ROS 2.

Software dependencies

This section lists the relevant third-party software dependencies used by the components in the scope of this threat model.

- Linux OS / Kernel

- ROS 2 core libraries

- H-ROS core libraries and packages

- ROS 2 system dependencies as defined by rosdep:

- RMW implementation: The H-ROS API allows choosing the DDS implementation.

- The threat model describes attacks with security enabled and disabled. If security is enabled, the security plugins are assumed to be configured and enabled.

See Mara ROS 2 Tutorials to find more details about software dependencies.

External Actors

This section gathers all the actors interacting with the robotic system.

- End users

- Robotics user: Interacts with the robot to perform work tasks.

- Robotics operator: Performs maintenance tasks on the robot and integrates the robot with the industrial network.

- Robotics researcher: Develops new applications and algorithms for the robot.

- Manufacturer

- Robotics engineer: Develops new updates and features for the robot itself. Performs on-site robot setup and maintenance tasks.

Robot Data assets

This section lists all the assets storing information within the system.

- ROS 2 network (topics, actions, services information)

- Private Data

- Logging messages

- Restricted Data

- Robot Speed and Orientation. Some understanding of the current robot task may be reconstructed from those messages.

- H-ROS API

- Configuration and status data of hardware.

- Modules (joints and gripper)

- ROS logs on each module. Private data, metrics and configuration information that could lead to GDPR issues or disclosure of current robot tasks.

- Module embedded software (drivers, operating system, configuration, etc.)

- Secret data. Intellectual Property (IP) theft is a critical issue here.

- Credentials. CI/CD, enterprise assets, SROS certificates.

- ORC Industrial PC

- Public information. Motion planning algorithms for driving the robot (MoveIt! motion planning framework).

- Configuration data. Private information with safety implications. Includes configuration for managing the robot.

- Programmed routines. Private information with safety implications.

- Credentials. CI/CD, enterprise assets, SROS certificates.

- CI/CD and OTA subsystem

- Configuration data.

- Credentials

- Firmware files and source code. Intellectual property, both end-user and manufacturer.

- Robot Data

- System and ROS logs are stored on each joint module’s filesystem.

- Robot system is subject to physical attacks (physically removing the disk from the robot to read its data).

- Cloud Data

- Different versions of the software are stored on the OTA server.

- Secret Management

- DDS / ROS Topics

- If SROS is enabled, private keys are stored on the local filesystem of each module.

- Mender OTA

- Mender OTA client certificates are stored on the robot file system.
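Because SROS private keys and Mender client certificates are stored on each module's filesystem, one basic hardening check is that key material is not accessible to group or other users. The following is a minimal sketch of such an audit. The function name `find_exposed_keys`, the `root` directory and the `*.pem` pattern are illustrative assumptions, not part of SROS or Mender; the real keystore layout on the modules may differ.

```python
import stat
from pathlib import Path


def find_exposed_keys(root, pattern="*.pem"):
    """Return files under `root` matching `pattern` that group or others can access.

    `root` and `pattern` are hypothetical; adapt them to the actual keystore layout.
    """
    exposed = []
    for path in sorted(Path(root).rglob(pattern)):
        if not path.is_file():
            continue
        mode = stat.S_IMODE(path.stat().st_mode)
        # Any group/other permission bit on key material is flagged.
        if mode & (stat.S_IRWXG | stat.S_IRWXO):
            exposed.append(path)
    return exposed
```

A check like this only addresses local, unprivileged access. It does not protect against the physical attack listed above (removing the disk to read its data), which would require measures such as storage encryption.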

Use case scenarios

As described above, the application considered is a MARA modular robot operating in an industrial environment while performing a pick & place activity. For this use case, all possible external actors have been included. The actions they can perform on the robotic system have been limited to the following:

- End-Users: From the end-user perspective, the functions considered are the ones needed for a factory line to work. Among these functions are the ones that keep the robot working and productive. In an industrial environment, this group of external actors is the one making use of the robot in their facilities.

- Robotics Researcher: Development, testing and validation

- A research engineer develops a pick and place task with the robot. They may:

- Restart the robot

- Restart the ROS graph

- Physically interact with the robot

- Receive software updates from OTA

- Check for updates

- Configure ORC control functionalities

- Robotics User: Collaborative tasks

- Start the robot

- Control the robot

- Work alongside the robot

- Robotics Operator: Automation of industrial tasks

- An industrial operator uses the robot in a factory. They may:

- Start the robot

- Control the robot

- Configure the robot

- Include the robot into the industrial network

- Check for updates

- Configure and interact with the ORC

- Manufacturer: From the manufacturer perspective, the application considered is the development of the MARA robotic platform, using an automated system for deployment of updates, following a CI/CD system. These are the actors who create and maintain the robotic system itself.

- Robotics Engineer: Development, testing and validation

- Development of new functionality and improvements for the MARA robot.

- Develop new software for the H-ROS SoM

- Update OS and system libraries

- Update ROS 2 subsystem and control nodes

- Deployment of new updates to the robots and management of the fleet

- In-Place robot maintenance

Entry points

This section outlines the possible entry points an attacker could use as attack vectors to compromise the MARA.

- Physical Channels

- Exposed debug ports.

- Internal field bus.

- Hidden development test points.

- Communication Channels

- DDS / ROS 2 Topics

- Topics can be listened to or written to by any actor that:

- Is connected to a network to which DDS packets are routed.

- Has the necessary permissions (read / write) if SROS is enabled.

- When SROS is enabled, attackers may try to compromise the certificate authority (CA) or the private keys in order to generate or intercept private keys, or to issue malicious certificates that allow spoofing.

- H-ROS API

- The H-ROS API is accessible to anyone on the same LAN or WAN (if port forwarding is enabled).

- When authentication (in the H-ROS API) is enabled, attackers may try to bypass or break it.

- Mender OTA

- Updates are pushed from the server.

- Updates could be intercepted and modified before reaching the robot.

- Deployed Software

- ROS nodes running on hardware are compiled by the manufacturer and deployed directly. An attacker may tamper with the software running on the hardware by compromising the OTA services.

- An attacker compromising the developer workstation could introduce a vulnerability in a binary which would then be deployed to the robot.

- MoveIt! motion planning library may contain exploitable vulnerabilities.
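The OTA entry point above notes that updates could be intercepted and modified before reaching the robot. As an illustration of the kind of check that detects such tampering, here is a minimal sketch that compares the SHA-256 digest of a downloaded artifact with an expected value. This is not how Mender itself verifies artifacts; the function names and the idea that the expected digest is published separately by the manufacturer are assumptions made for this example.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=65536):
    """Compute the SHA-256 hex digest of a file without loading it all in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as artifact:
        for chunk in iter(lambda: artifact.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def artifact_is_intact(path, expected_hex):
    """Return True if the artifact at `path` matches the expected digest.

    `expected_hex` is assumed to come from a channel the attacker cannot modify.
    """
    # compare_digest performs a constant-time comparison.
    return hmac.compare_digest(sha256_of_file(path), expected_hex.lower())
```

A digest check only helps if the expected value is obtained over a channel the attacker does not control. If the OTA server or the developer workstation is compromised, as described under Deployed Software, the attacker can replace both the artifact and its digest, so a signature made with a key held outside those systems would be the stronger option.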

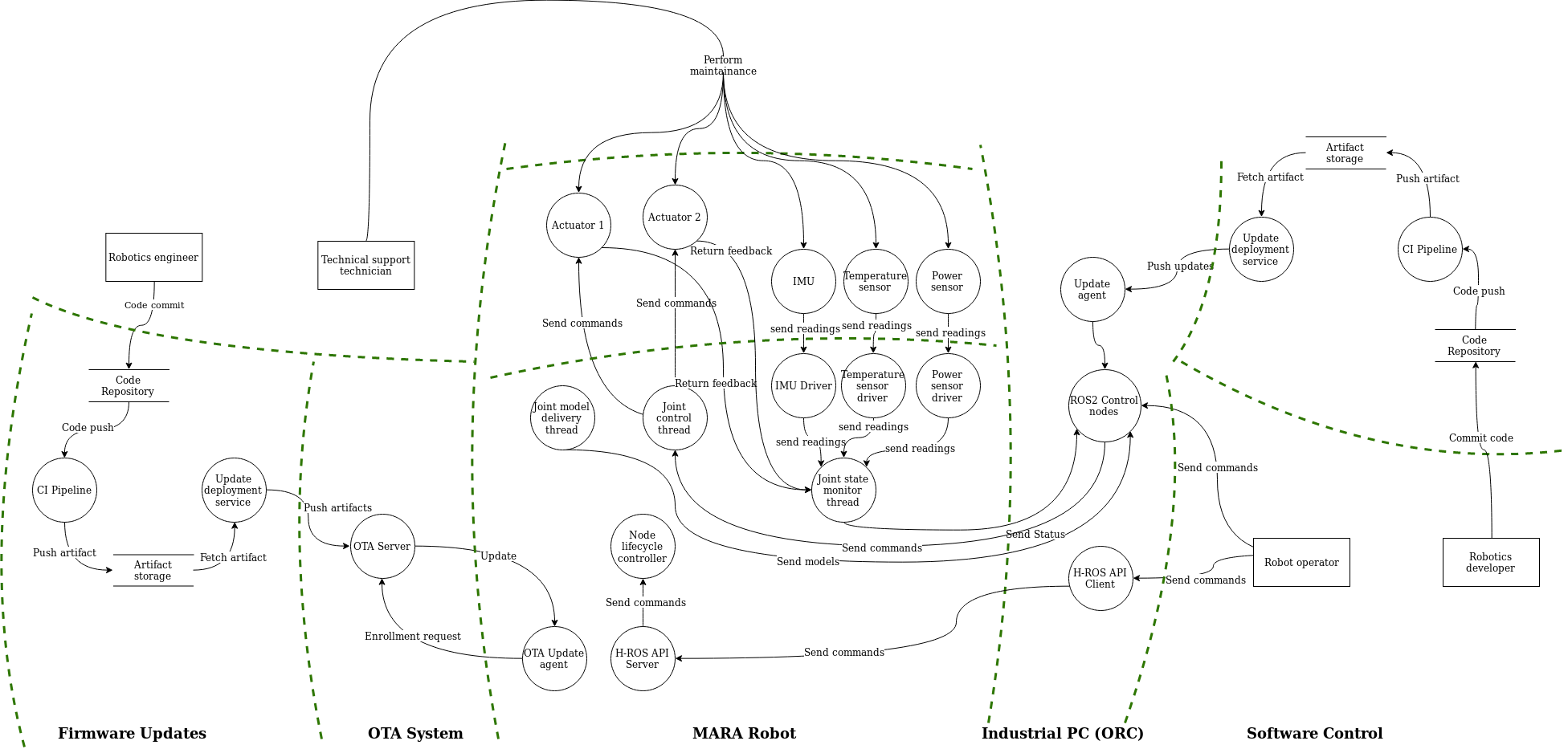

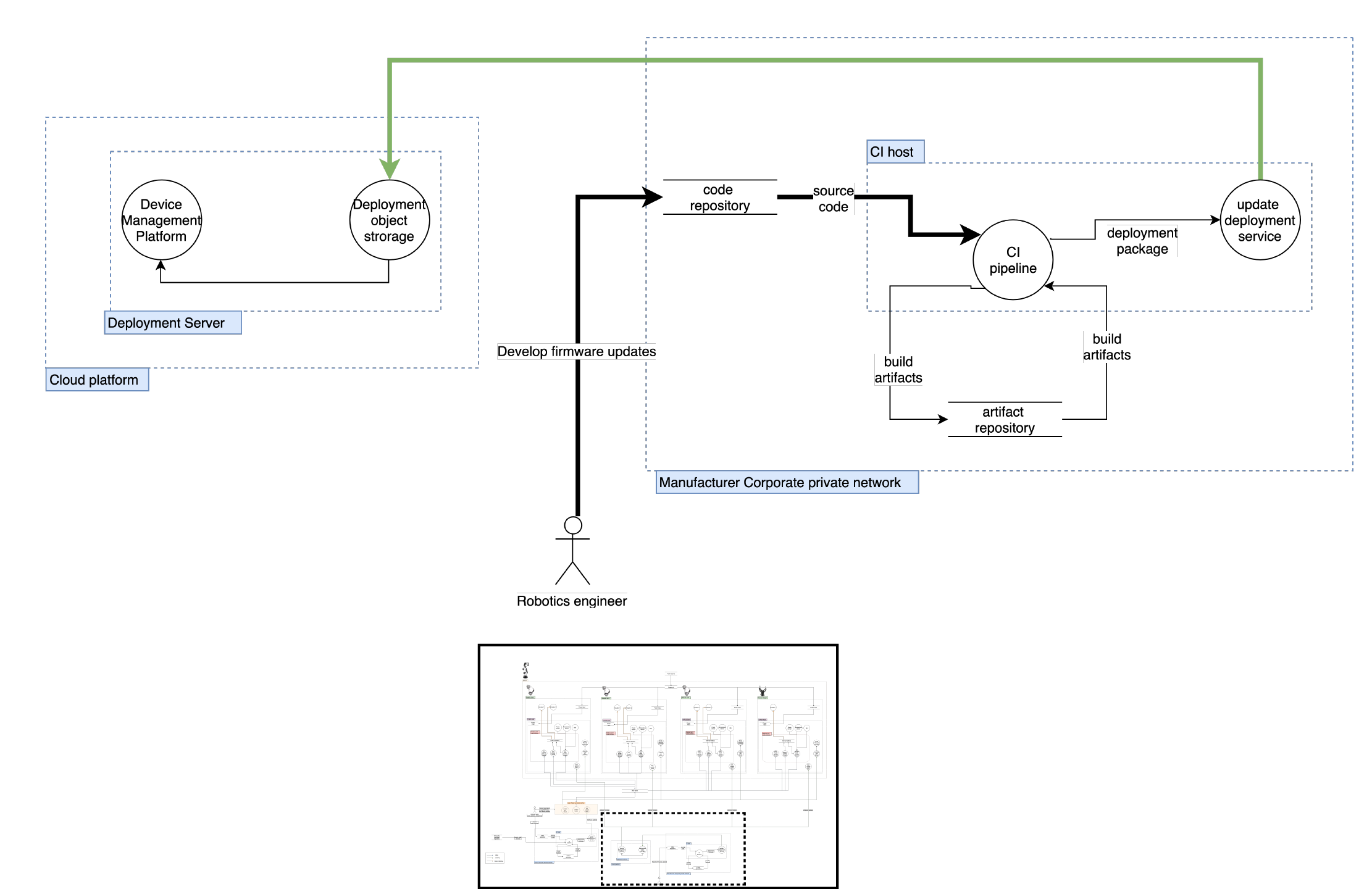

Trust Boundaries for MARA in pick & place application

The following content applies the threat model to an industrial robot in its environment. The objective of this threat analysis is to identify the attack vectors for the MARA robotic platform. Those attack vectors are identified and classified according to the risk and the services involved. The next sections detail MARA’s components and risks using the threat model above, based on STRIDE and DREAD.

The diagram below illustrates MARA’s application with different trust zones (trust boundaries shown with dashed green lines). The number and scope of trust zones depend on the underlying infrastructure.

Diagram Source

(edited with Draw.io)

Diagram Source

(edited with Draw.io)

The trust zones illustrated above are the following:

- Firmware Updates: This zone is where the manufacturer develops the different firmware versions for each robot.

- OTA System: This zone is where the firmware images are stored for the robots to download.

- MARA Robot: All the robot’s components and internal communications are gathered in this zone.

- Industrial PC (ORC): The industrial PC is considered a zone of its own because it manages the software the end-user develops and sends it to the robot.

- Software control: This zone is where the end user develops software for the robot, defining the tasks to be performed.

Threat Model

Each generic threat described in the main threat table can be instantiated on the MARA.

This table indicates which of MARA’s particular assets and entry points are impacted by each threat. A check sign (✓) means impacted while a cross sign (✘) means not impacted. The “SROS Enabled?” column states whether using SROS would mitigate the threat or not. A check sign (✓) means that the threat could be exploited while SROS is enabled while a cross sign (✘) means that the threat requires SROS to be disabled to be applicable.
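The “SROS Enabled?” symbols used in the table below can be modelled as a small data structure, which may help when filtering the threats that remain relevant once SROS is turned on. This is only a sketch: the class and function names are hypothetical, and the combined value ✘/✓ is interpreted here, as an assumption, as "applicable in both configurations".

```python
from dataclasses import dataclass
from enum import Enum


class SrosApplicability(Enum):
    REQUIRES_SROS_DISABLED = "✘"   # threat only applies when SROS is off
    WORKS_WITH_SROS_ENABLED = "✓"  # threat can be exploited with SROS on
    BOTH = "✘/✓"                   # assumption: applies in both configurations


@dataclass(frozen=True)
class ThreatRow:
    attack: str
    sros: SrosApplicability


def exploitable_with_sros(rows):
    """Keep the rows that are still applicable when SROS is enabled."""
    return [
        row for row in rows
        if row.sros is not SrosApplicability.REQUIRES_SROS_DISABLED
    ]


rows = [
    ThreatRow("Without SROS any node may have any name",
              SrosApplicability("✘")),
    ThreatRow("An attacker steals node credentials and spoofs the joint node",
              SrosApplicability("✓")),
]
remaining = exploitable_with_sros(rows)
```

With the two example rows taken from the table, only the stolen-credentials attack remains after filtering, which matches the reading that enabling SROS alone does not address it.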

| Threat | MARA Assets: Human Assets | MARA Assets: Robot App. | Entry Points: ROS 2 API (DDS) | Entry Points: Manufacturer CI/CD | Entry Points: End-user CI/CD | Entry Points: H-ROS API | Entry Points: OTA | Entry Points: Physical | SROS Enabled? | Attack | Mitigation | Mitigation Result (redesign / transfer / avoid / accept) | Additional Notes / Open Questions |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Embedded / Software / Communication / Inter-Component Communication | | | | | | | | | | | | | |
| An attacker spoofs a software component identity. | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | Without SROS any node may have any name so spoofing is trivial. | | Mitigating risk requires implementation of SROS on MARA. | No verification of components. An attacker could connect a fake joint directly. Direct access to the system is granted (No NAC). |
| | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✓ | ✘ | ✘/✓ | An attacker deploys a malicious node which is not enabling DDS Security Extension and spoofs the `joy_node` forcing the robot to stop. | | Risk is mitigated | |
| | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✓ | ✘ | ✓ | An attacker steals node credentials and spoofs the joint node forcing the robot to stop. | | Mitigating risk requires additional work. | |
| An attacker intercepts and alters a message. | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | Without SROS an attacker can modify `/goal_axis` or `trajectory_axis` messages sent through a network connection to e.g. stop the robot. | | Risk is reduced if SROS is used. | |
| An attacker writes to a communication channel without authorization. | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | Without SROS, any node can publish to any topic. | | | |
| An attacker listens to a communication channel without authorization. | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | Without SROS: any node can listen to any topic. | | Risk is reduced if SROS is used. | |
| | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘/✓ | DDS participants are enumerated and fingerprinted to look for potential vulnerabilities. | | Risk is mitigated if DDS-Security is configured appropriately. | |
| An attacker prevents a communication channel from being usable. | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | Without SROS: any node can "spam" any other component. | | Risk may be reduced when using SROS. | |
| | ✓ | ✓ | ✓ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ | A node can "spam" another node it is allowed to communicate with. | | Mitigating risk requires additional work. | How to enforce when nodes are malicious? Observe and kill? |
| Embedded / Software / Communication / Remote Application Interface | | | | | | | | | | | | | |
| An attacker gains unauthenticated access to the remote application interface. | ✓ | ✓ | ✘ | ✘ | ✘ | ✓ | ✘ | ✘ | ✘/✓ | An attacker connects to the H-ROS API in an unauthenticated way. Reads robot configuration and alters configuration values. | | Risk is mitigated. | |